Claude Code Agent Monitor

A professional monitoring platform for Claude Code agent activity. Captures sessions, agents, and tool events via native hooks, persists them in SQLite, and streams updates to a React UI over WebSocket — with no external services required.

System Overview

Claude Code Agent Monitor integrates with Claude Code through its native hook system. When Claude Code performs any action — tool use, session start, subagent orchestration, session end — it fires a hook that calls a small Node.js script bundled with this project. That script forwards the event over HTTP to the dashboard server, which stores it in SQLite and broadcasts it to the browser over WebSocket.

End-to-end data pipeline from Claude Code to the browser

The server binds to 0.0.0.0, but everything runs on your machine. No data leaves your system. No API keys. No external services.
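To make the hook-to-server hop concrete, here is a minimal sketch of how a raw hook payload could be wrapped before it is POSTed to the dashboard. The `{ hook_type, data }` envelope matches the ingestion example shown later in this document; the function name `buildHookEnvelope` and the empty-object fallback for malformed input are assumptions for illustration, not the project's actual code.

```typescript
// Hypothetical helper: wraps a raw hook payload (as read from stdin) in the
// { hook_type, data } envelope used by the dashboard's hook ingestion API.
type HookEnvelope = { hook_type: string; data: Record<string, unknown> };

function buildHookEnvelope(hookType: string, rawStdin: string): HookEnvelope {
  let data: Record<string, unknown> = {};
  try {
    const parsed: unknown = JSON.parse(rawStdin);
    if (parsed !== null && typeof parsed === "object" && !Array.isArray(parsed)) {
      data = parsed as Record<string, unknown>;
    }
  } catch {
    // Assumption: malformed input is forwarded as an empty payload instead of
    // throwing, so that the forwarder never blocks Claude Code.
  }
  return { hook_type: hookType, data };
}

// Example: the JSON string below stands in for what Claude Code writes to stdin.
const envelope = buildHookEnvelope(
  "PreToolUse",
  '{"session_id":"abc-123","tool_name":"Bash"}',
);
const requestBody = JSON.stringify(envelope); // body of the HTTP POST
```

The resulting `requestBody` is what a forwarder would send to the server; the HTTP call itself is left out so the sketch stays dependency-free.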

What's Included

Every feature is driven by real hook events — nothing is hardcoded or simulated in production mode.

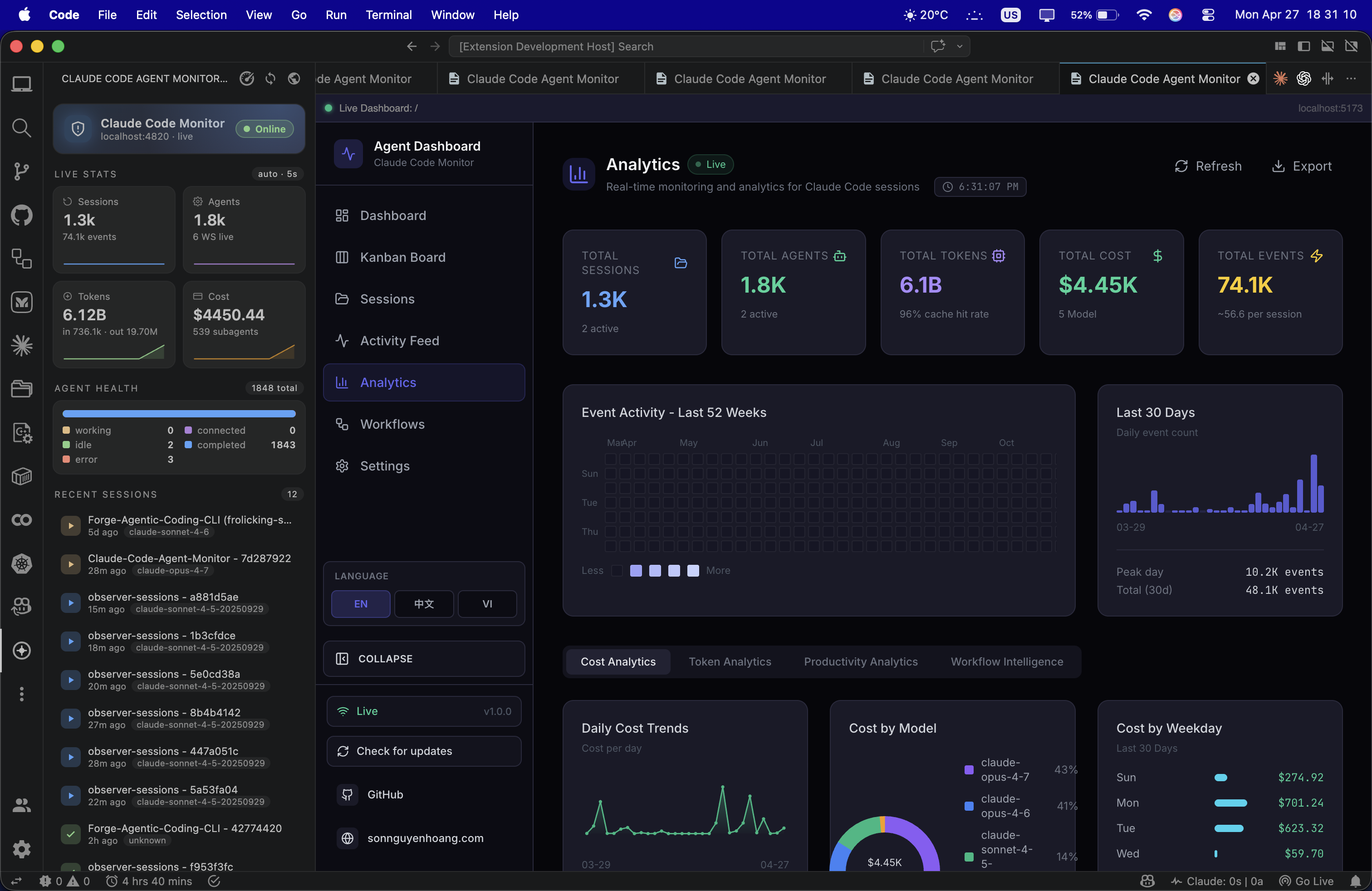

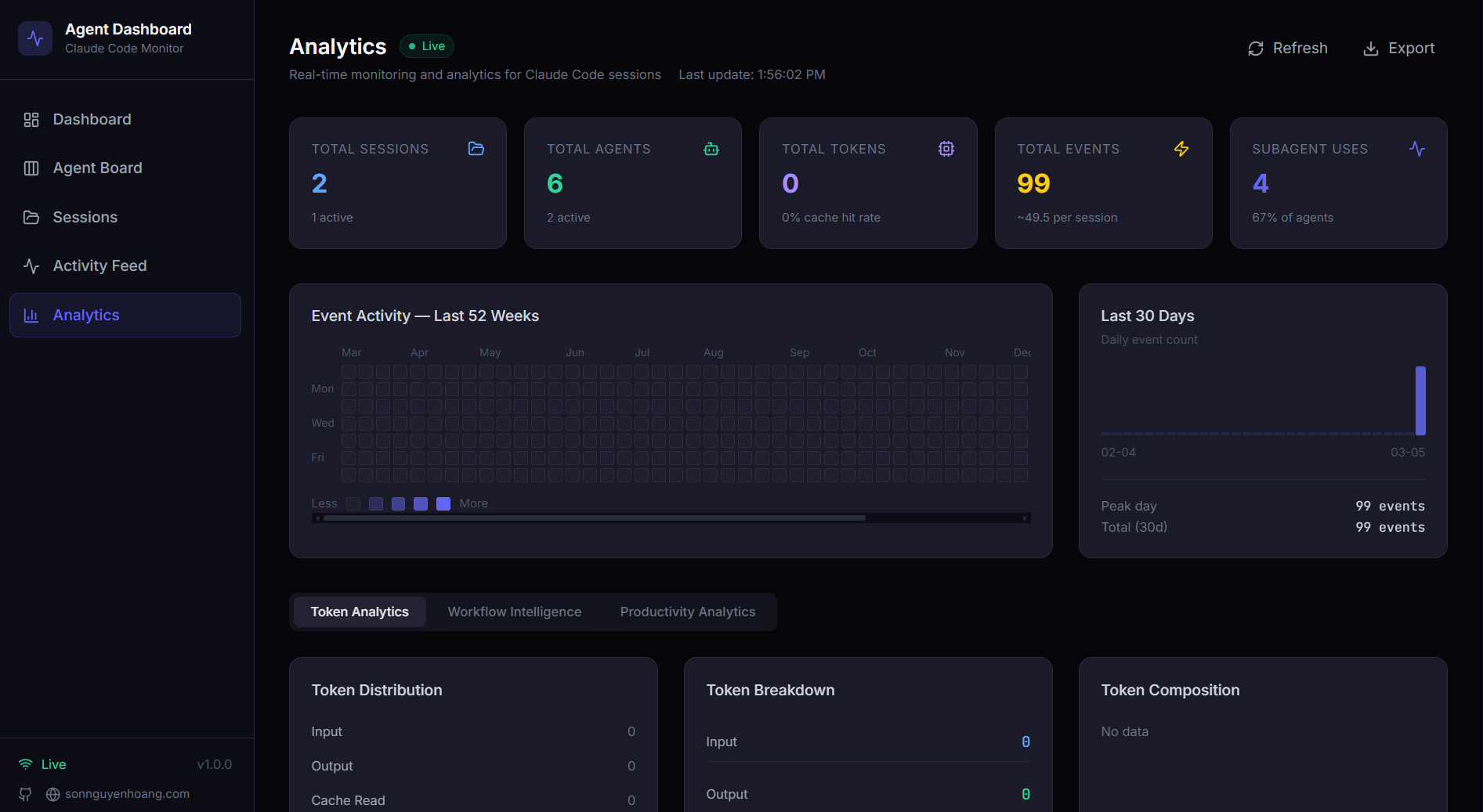

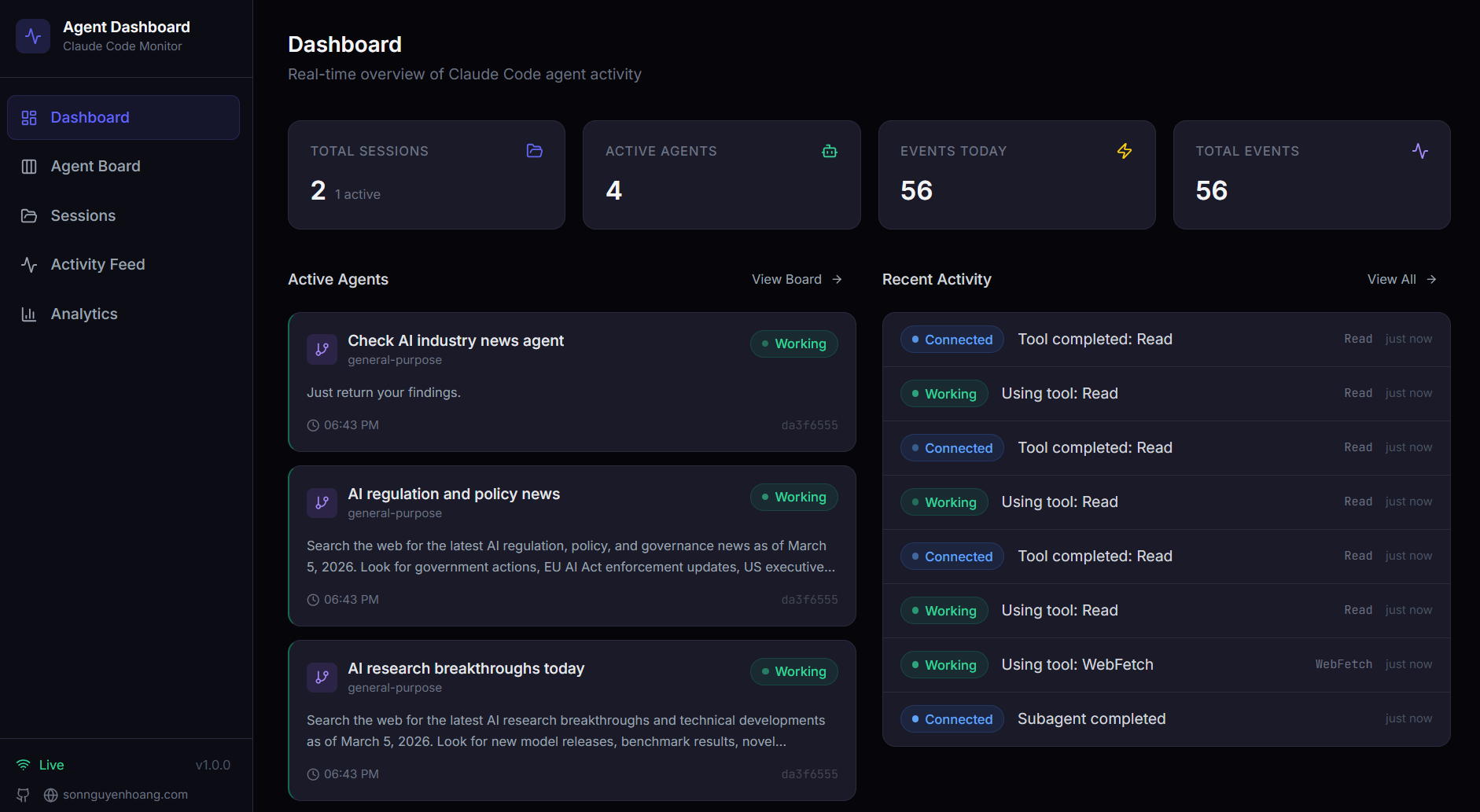

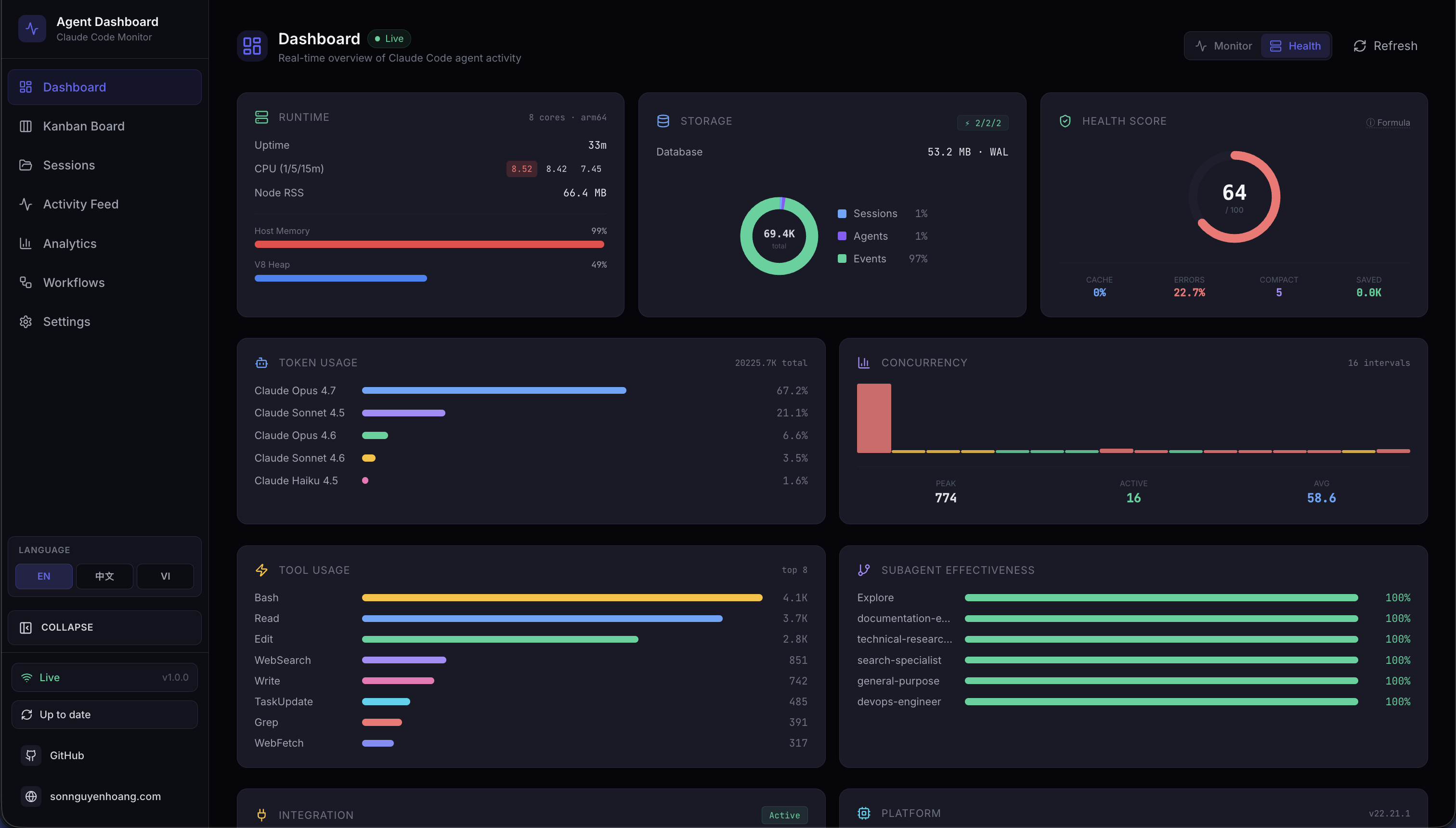

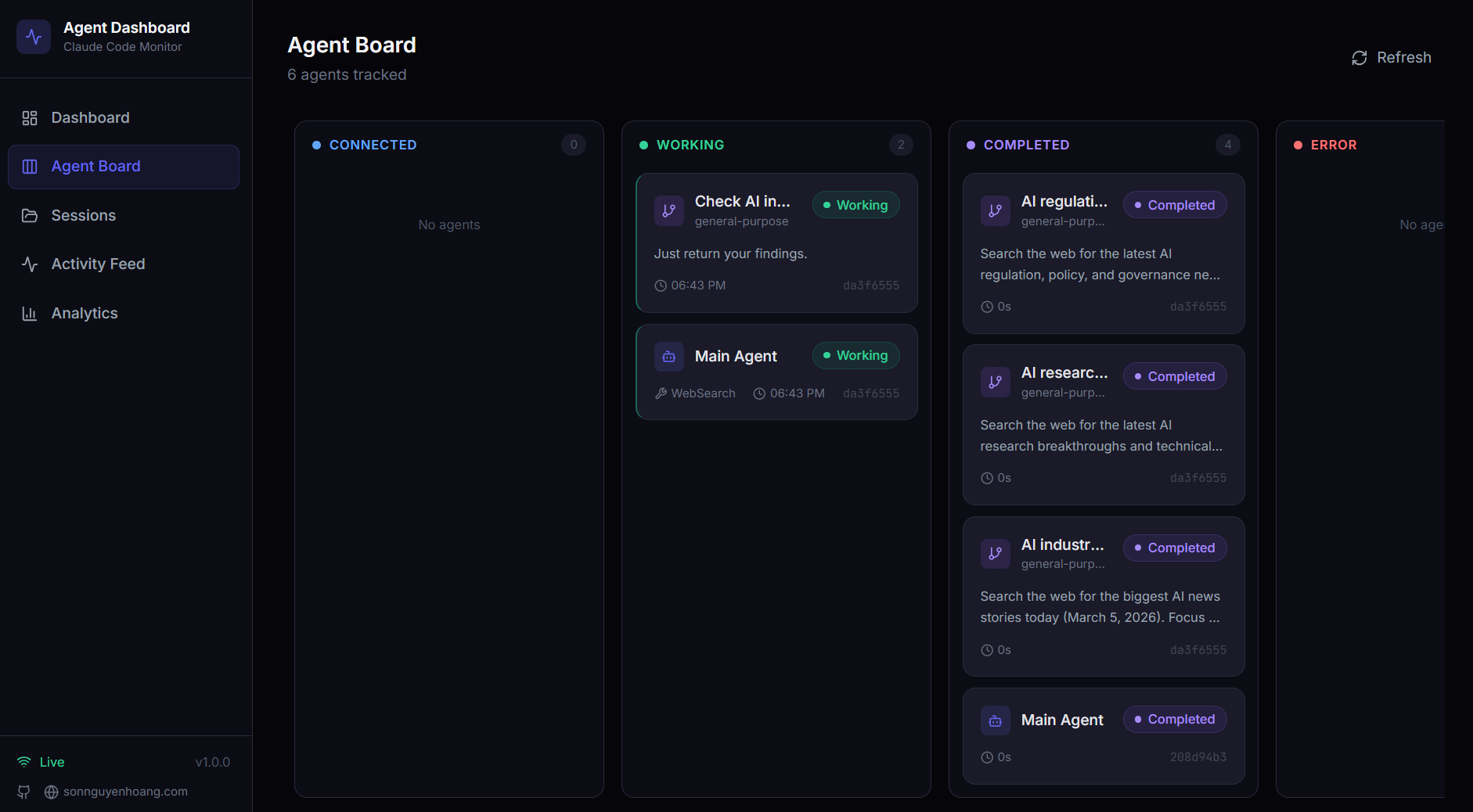

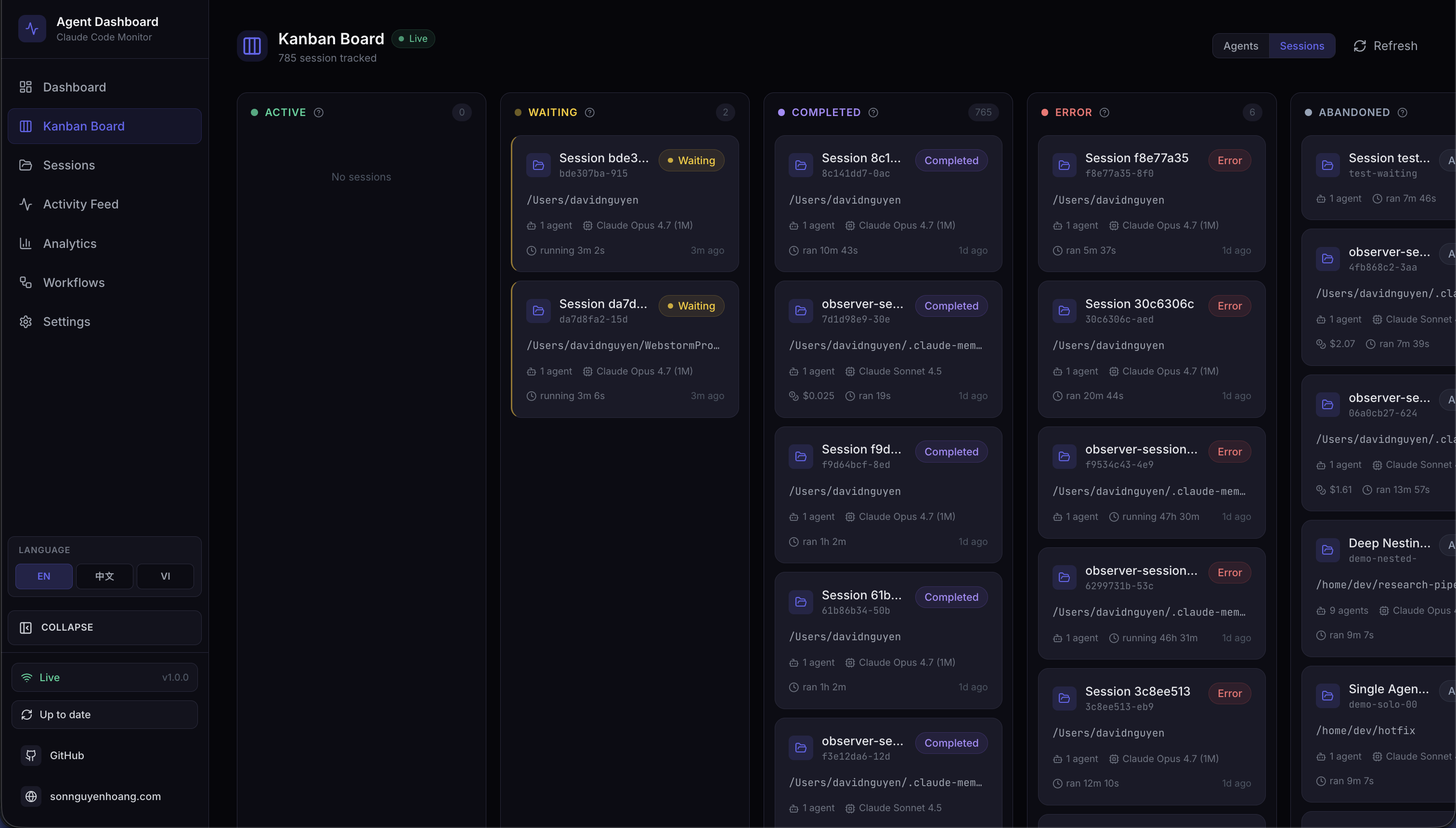

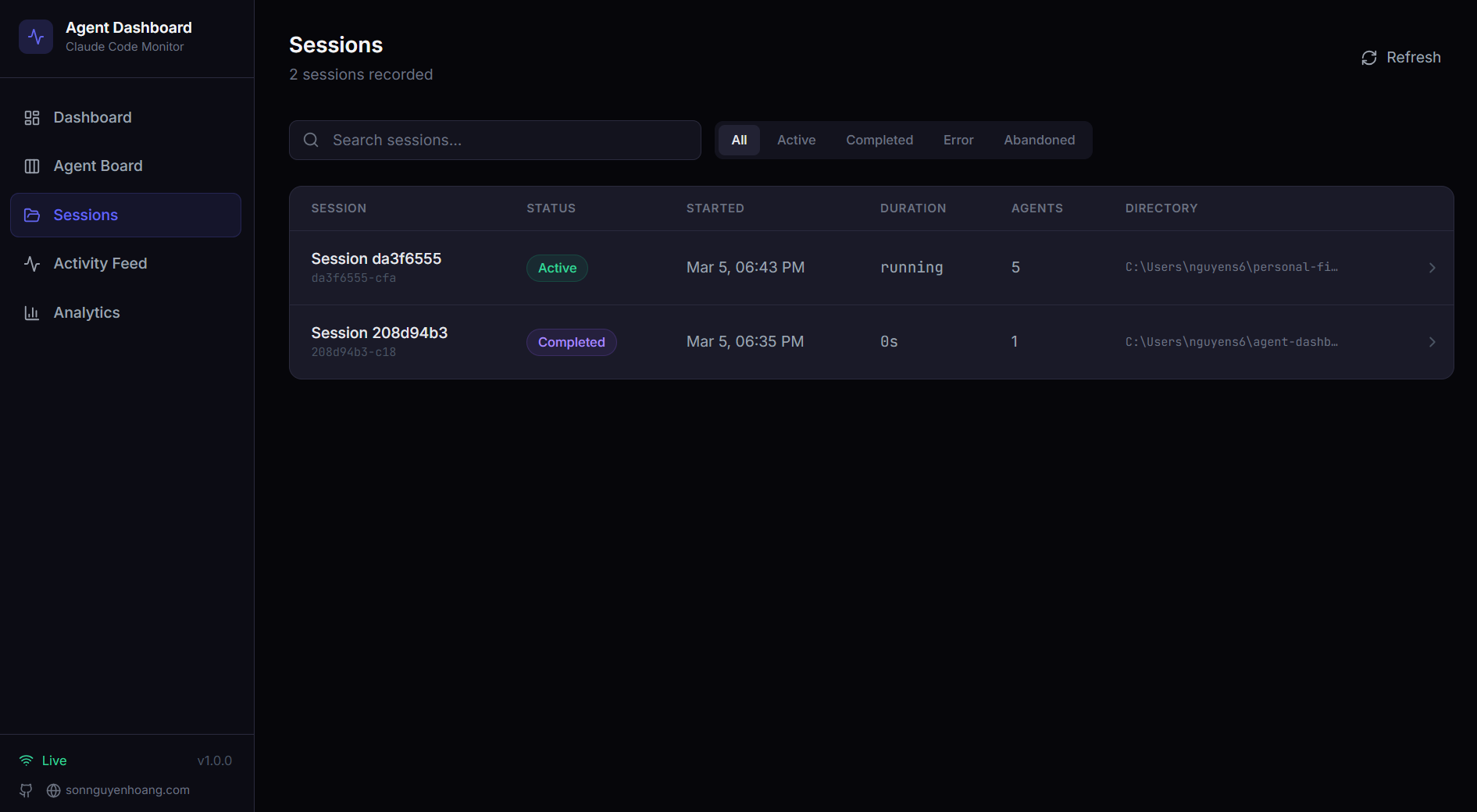

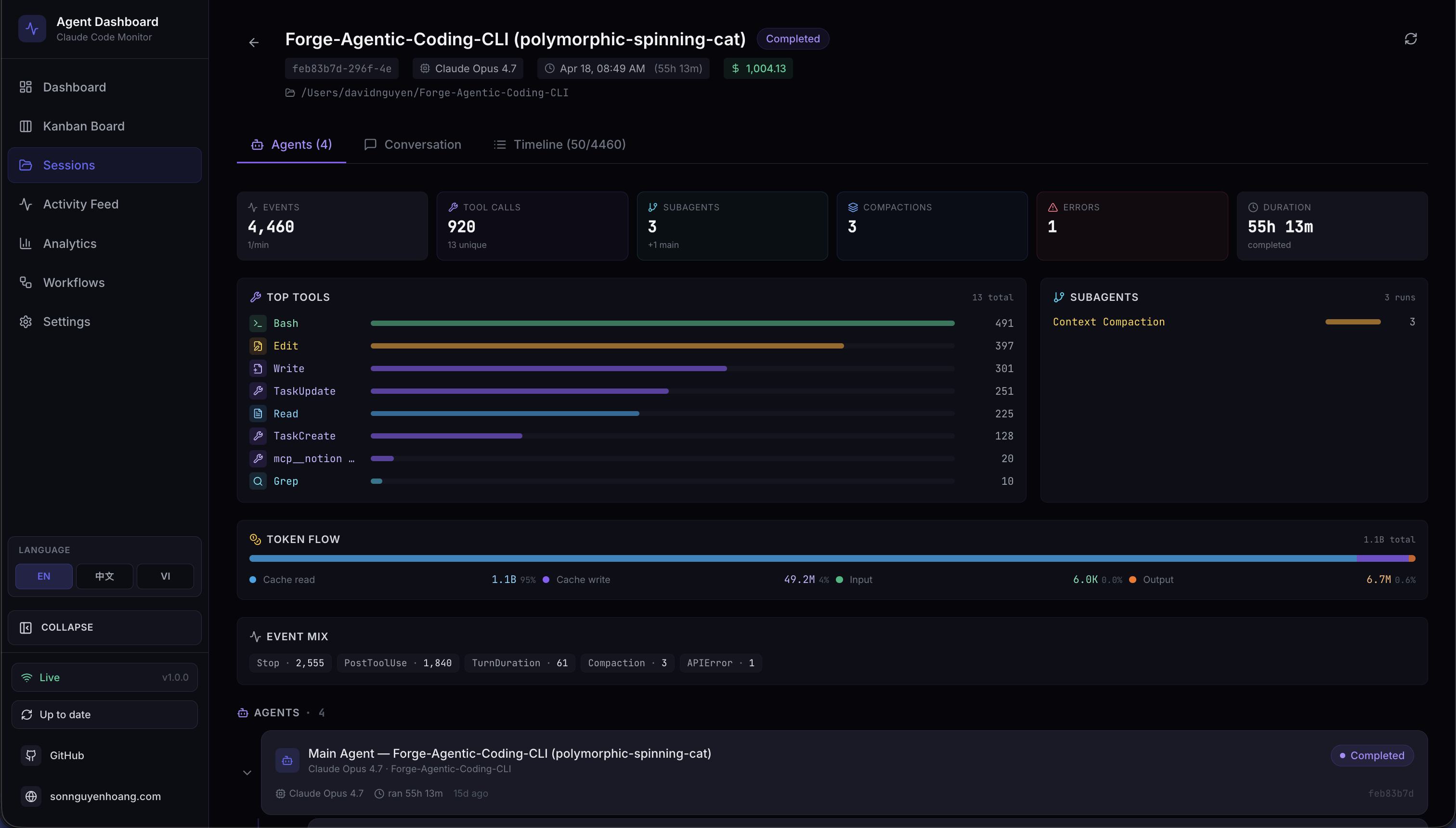

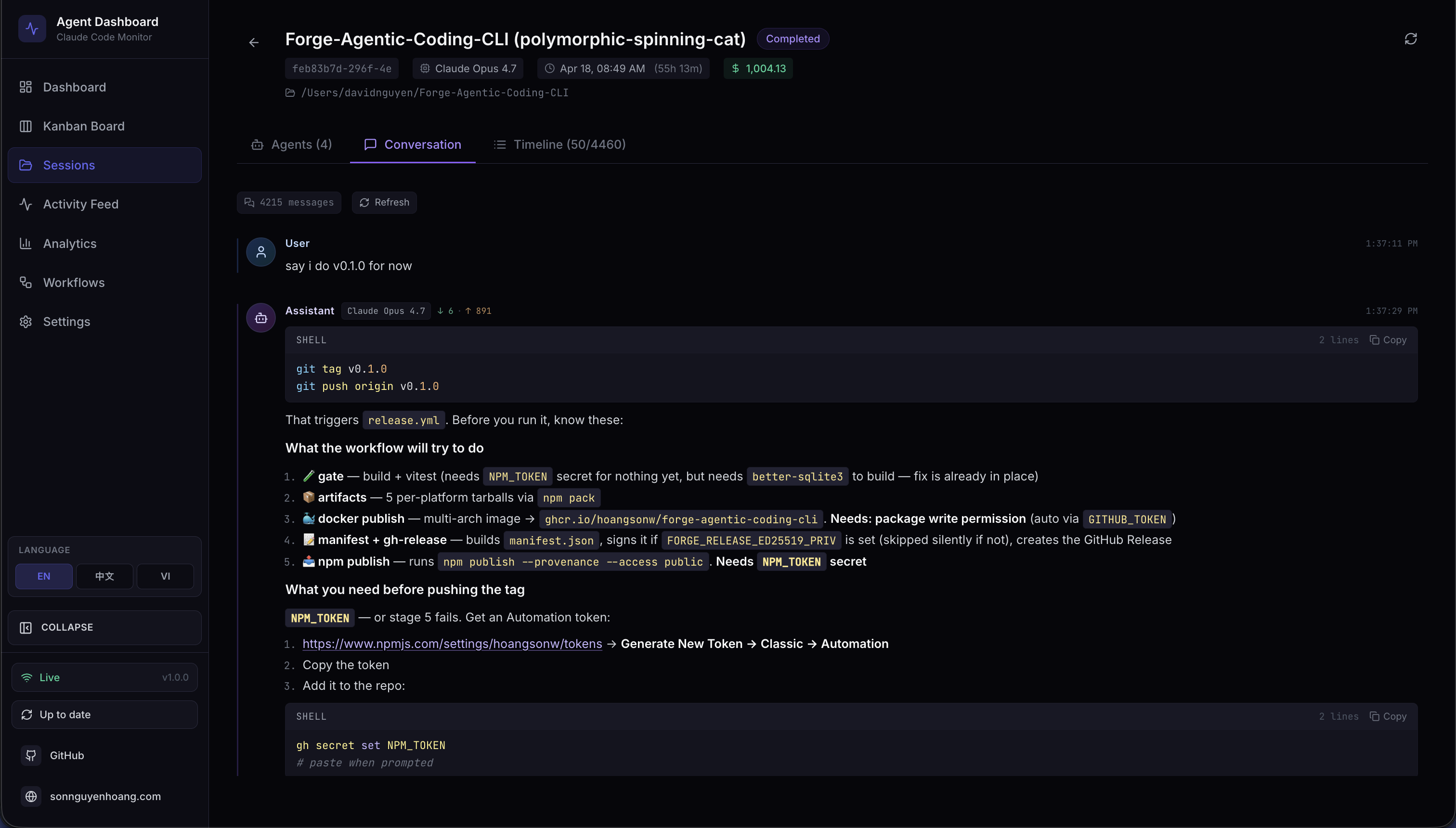

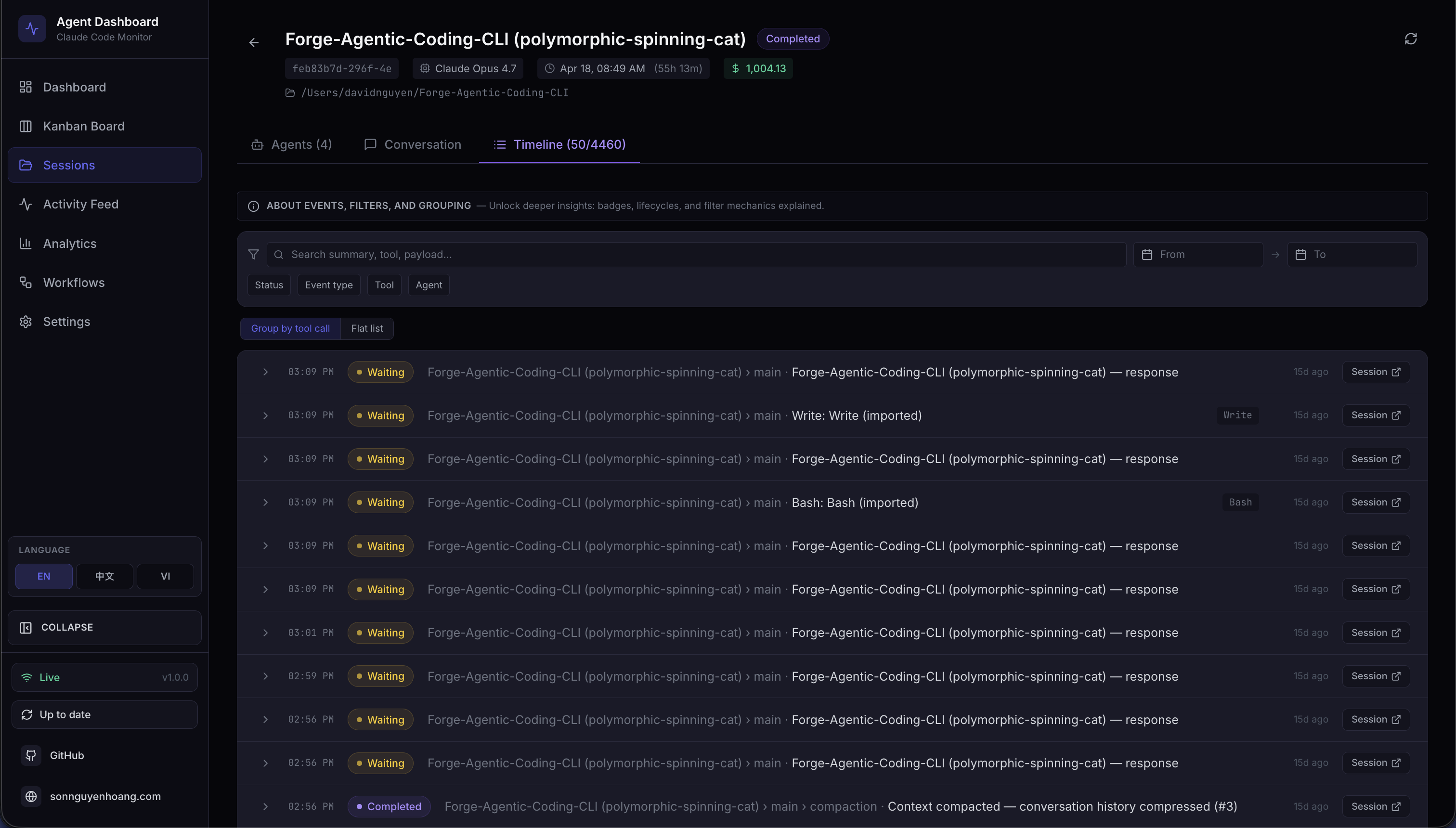

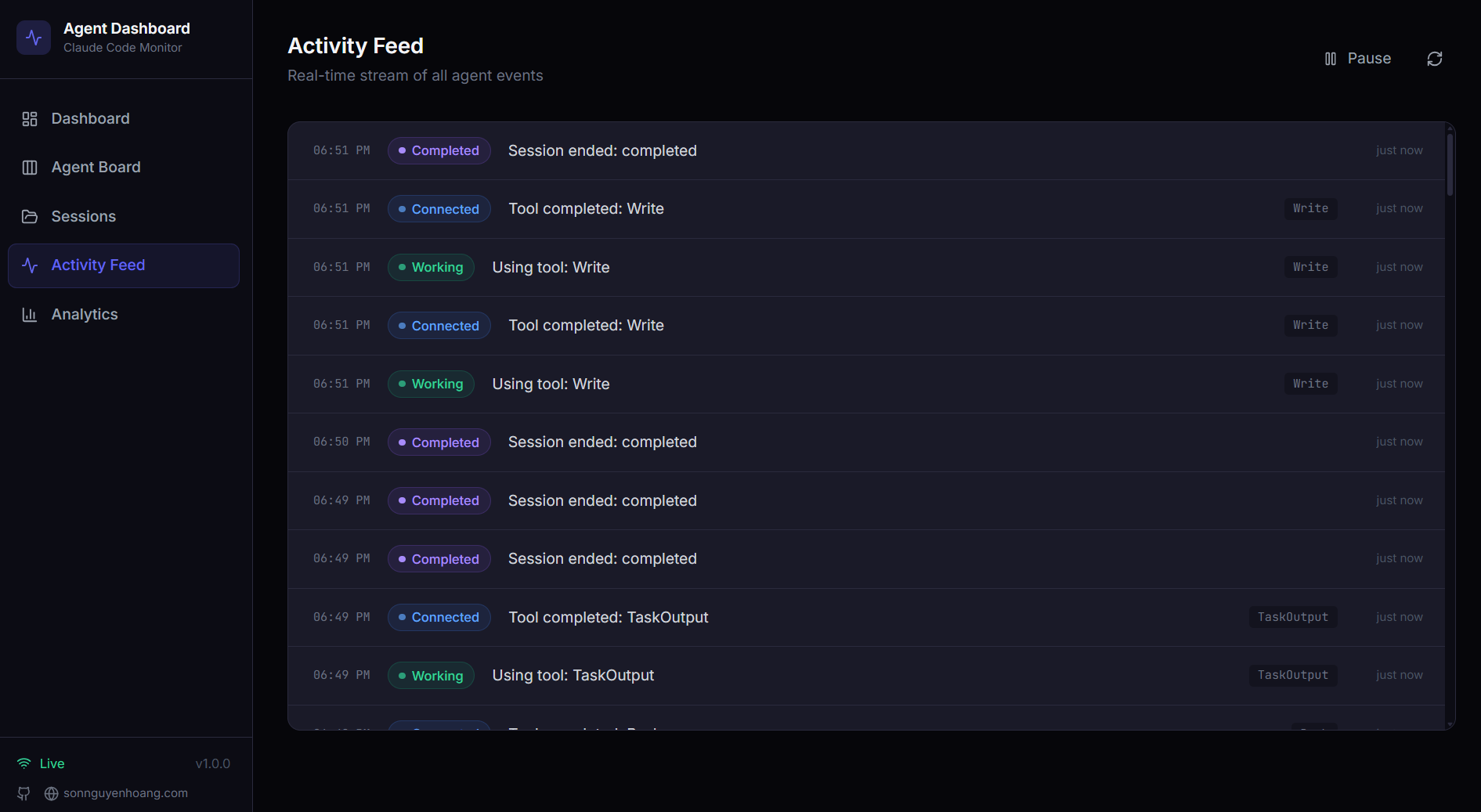

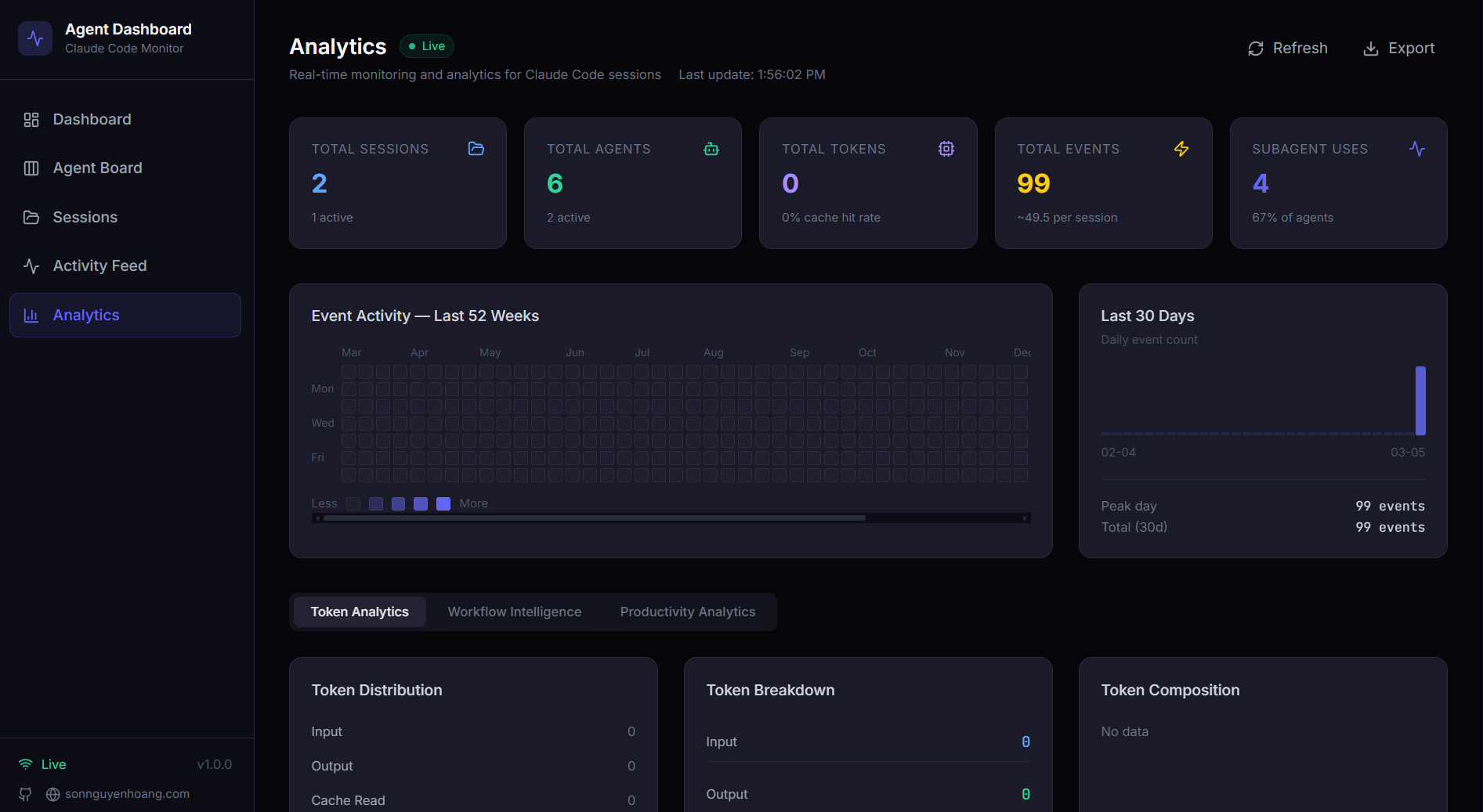

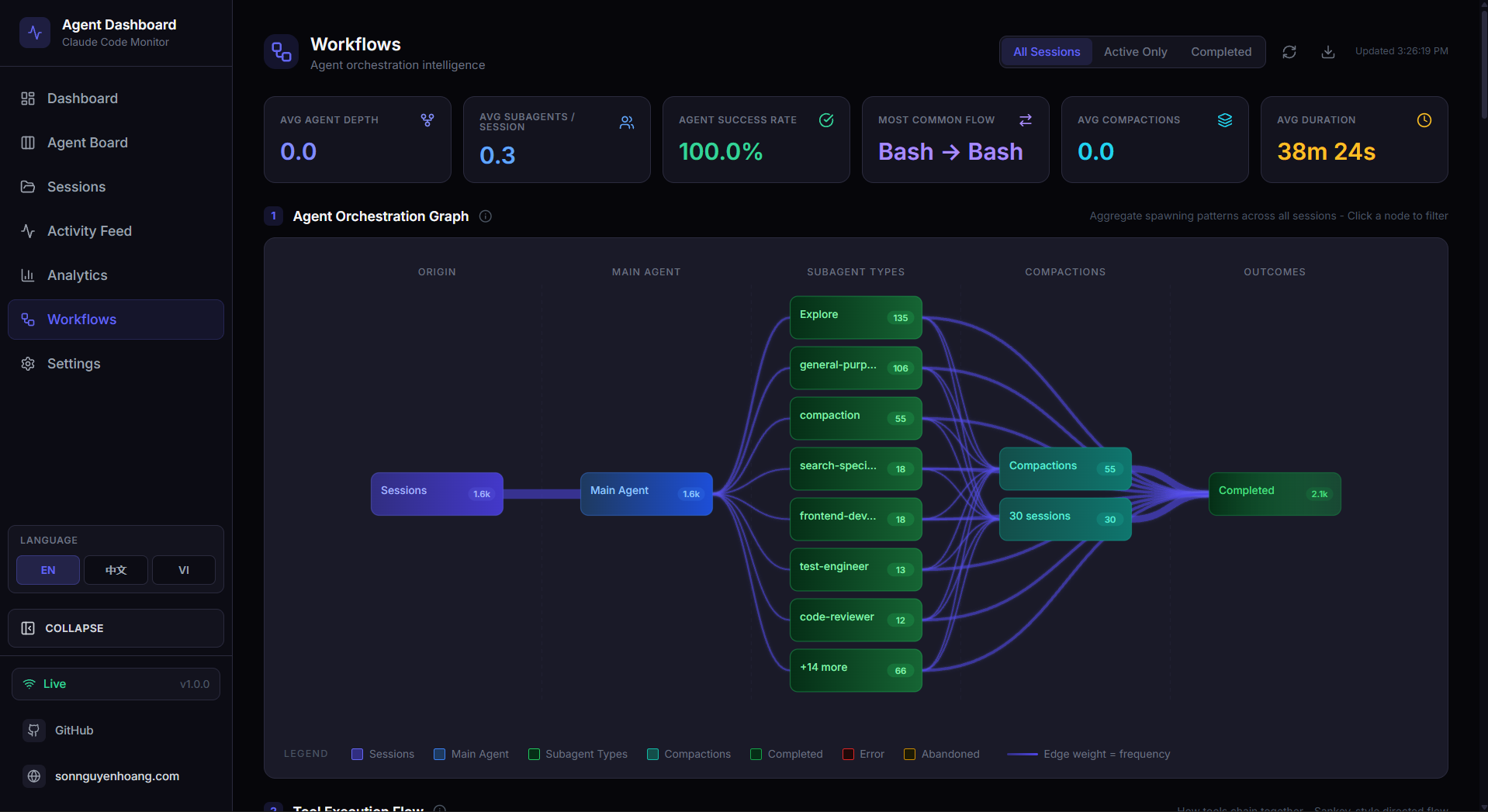

Screenshots

GitHub Star History

This chart tracks how interest in Claude Code Agent Monitor has grown over time. The curve keeps climbing as more developers discover the project, share it, and use it in real workflows. Each new star is a small vote of confidence from the community.

Hook Events Captured

| Hook Type | Trigger | Dashboard Action |
|---|---|---|
| SessionStart | Claude Code session begins | Creates session and main agent. Stamps awaiting_input_since so the row lands in Waiting from the start (the CLI is at a prompt). Reactivates resumed sessions. Abandons orphaned sessions with no activity for DASHBOARD_STALE_MINUTES (default 180). |
| UserPromptSubmit | User hits enter on a prompt | Clears the waiting flag and promotes the main agent to Working. The only reliable signal that text-only assistant turns have started, since they emit no PreToolUse before Stop. |
| PreToolUse | Agent begins using a tool | Clears the waiting flag, sets agent → Working, current_tool set. If tool is Agent, subagent record created. |
| PostToolUse | Tool execution completes | Clears the waiting flag (covers permission-prompt approvals mid-tool). current_tool cleared. Agent stays Working. |
| Stop | Claude finishes a turn | Non-error: main agent → waiting; UI shows Waiting until the next user input. stop_reason=error: marks the agent and session Error. Background subagents keep running. |
| SubagentStop | Background agent finished | Matched subagent → Completed. Deliberately does not clear the waiting flag, because a backgrounded subagent finishing tells us nothing about the human. Also kicks off a fire-and-forget JSONL scan (scanAndImportSubagents) that walks the session's subagents/agent-*.jsonl files, pairs tool_use ↔ tool_result blocks by tool_use_id, and emits per-tool PreToolUse + PostToolUse events under each subagent's own agent_id. This surfaces tool calls that subagents make internally and which never fire any hooks. |
| Notification | Agent sends notification | Event logged to activity feed. Permission/input-prompt patterns (e.g. "needs your permission", "waiting for your input") set the agent to waiting and stamp awaiting_input_since. Compaction-related notifications tagged as Compaction events. Triggers a browser notification if enabled. |
| Compaction | /compact detected in JSONL | Creates a compaction subagent → Completed. Detected via isCompactSummary entries in the transcript. Token baselines preserve pre-compaction totals. Periodic scanner (cadence ~¼ of DASHBOARD_STALE_MINUTES) catches compactions when no hooks fire. |
| APIError | API error detected in transcript | Extracted from JSONL during history import, real-time transcript scanning, or the error detection watchdog. Captures quota limits, rate limits, auth failures, and other API errors. Immediately marks sessions and agents as error (previously these were recorded as events without changing status). |
| TurnDuration | Per-turn timing recorded | Extracted from JSONL turn boundaries. Records the duration of each assistant turn for latency analysis. |
| SessionEnd | Claude Code CLI process exits | Drops the waiting flag. If the session is already in Error, the error state is preserved; otherwise marks all agents and the session as Completed. Evicts the session's transcript from the shared cache. |

Quick Start

Clone

Clone the repository to your machine

Install

Run npm run setup to install all dependencies

Start

Run npm run dev — server + client launch automatically

Use Claude

Start a new Claude Code session — events appear in real-time

# 1. Clone

git clone https://github.com/hoangsonww/Claude-Code-Agent-Monitor.git

cd Claude-Code-Agent-Monitor

# 2. Install all dependencies (server + client)

npm run setup

# 3. Start in development mode

npm run dev

# → Express server on http://localhost:4820

# → Vite dev server on http://localhost:5173

# 4. (Optional) Build and run the local MCP server

npm run mcp:install

npm run mcp:build

npm run mcp:start # stdio (for MCP host integration)

npm run mcp:start:http # HTTP + SSE server on port 8819

npm run mcp:start:repl # interactive CLI with tab completion

# 5. Open the dashboard

# http://localhost:5173 (dev)

# http://localhost:4820 (prod after npm run build && npm start)

Alternative: Docker / Podman

A multi-stage Dockerfile and docker-compose.yml are included.

You can run the monitor with either Docker or Podman and keep the SQLite database in a named volume.

# Docker Compose

docker compose up -d --build

# Podman Compose

CLAUDE_HOME="$HOME/.claude" podman compose up -d --build

# Plain Docker / Podman

docker build -t agent-monitor .

docker run -d --name agent-monitor \

-p 4820:4820 \

-v "$HOME/.claude:/root/.claude:ro" \

-v agent-monitor-data:/app/data \

agent-monitor

When you run the server directly on the host with npm run dev or npm start, it automatically writes Claude Code hook entries to ~/.claude/settings.json. If you run the dashboard in Docker or Podman, install hooks from the host with npm run install-hooks after the container is up, then restart Claude Code.

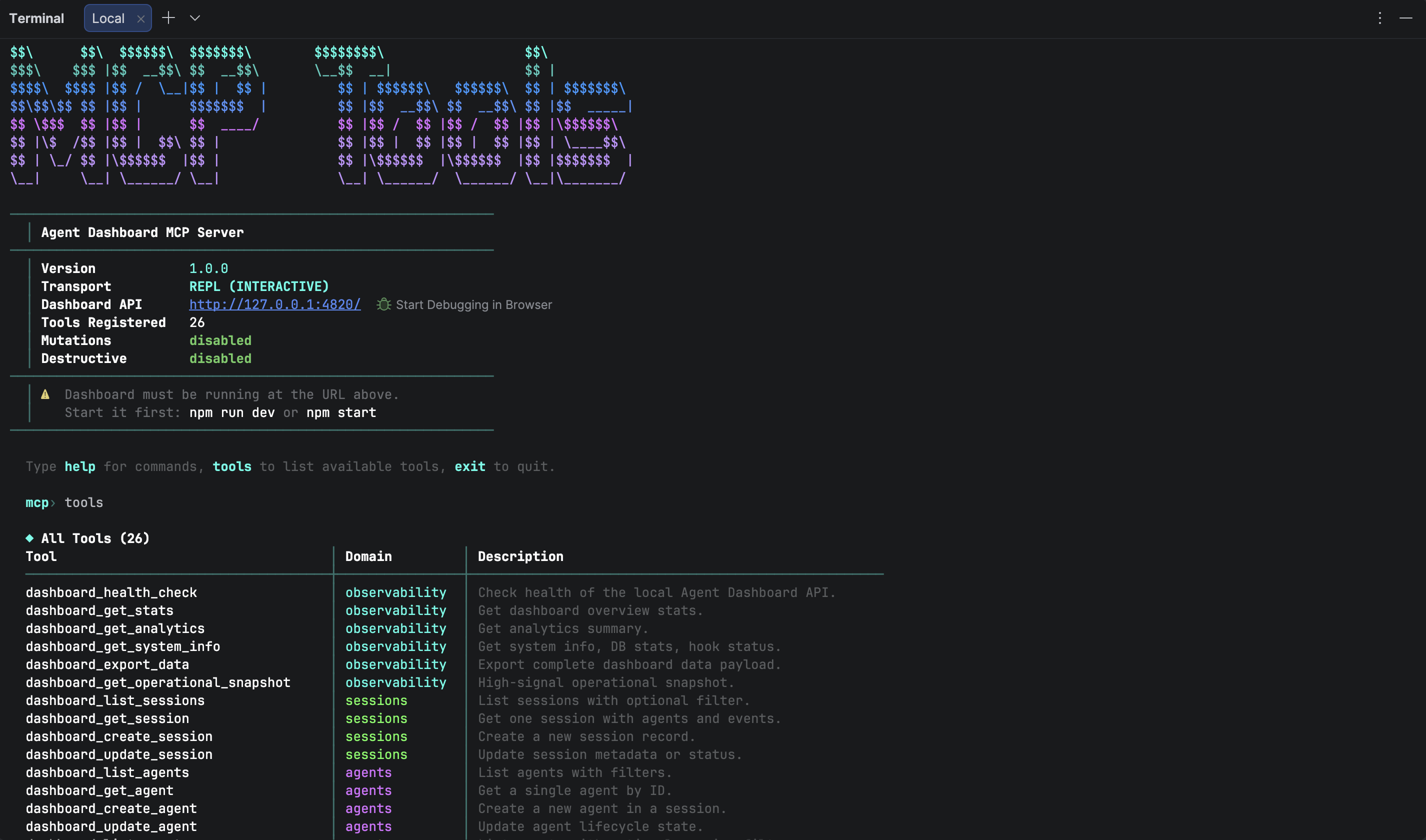

Optional: Enable MCP and Agent Extensions

This repository also ships a local MCP server under mcp/ and extension scaffolding for both Claude Code and Codex. These are optional for the dashboard UI, but recommended for complete local-agent workflows. The MCP server supports stdio (for host integration), HTTP+SSE (for remote clients), and an interactive REPL (for operator debugging).

# MCP lifecycle

npm run mcp:install

npm run mcp:build

npm run mcp:start # stdio (default — for MCP hosts)

npm run mcp:start:http # HTTP + SSE server on port 8819

npm run mcp:start:repl # interactive CLI with tab completion

Verification

After starting a Claude Code session, you should see:

| Page | Expected |
|---|---|
| Sessions | Your session listed with status Waiting (a fresh CLI is sitting at the prompt); flips to Active the moment Claude starts a turn |
| Kanban Board | A Main Agent card in the Waiting column until you type your first message; flips to Working on UserPromptSubmit / PreToolUse and back to Waiting after each Stop |
| Activity Feed | Events streaming in; click any row to expand payload, use "Session →" to drill into session details |
| Dashboard | Stats updating in real-time |

Hooks only fire to a running server. If Claude Code was already running when you started the dashboard, restart the Claude Code session.

Configuration

Environment Variables

| Variable | Default | Description |
|---|---|---|
| DASHBOARD_PORT | 4820 | Port the Express server listens on |
| CLAUDE_DASHBOARD_PORT | 4820 | Port used by the hook handler to reach the server (for custom port setups) |
| MCP_DASHBOARD_BASE_URL | http://127.0.0.1:4820 | Base URL used by the local MCP server when calling dashboard APIs |
| MCP_TRANSPORT | stdio | MCP transport mode: stdio, http, repl |
| MCP_HTTP_PORT | 8819 | Port for the MCP HTTP+SSE server (only when MCP_TRANSPORT=http) |
| MCP_HTTP_HOST | 127.0.0.1 | Bind address for the MCP HTTP server |
| DASHBOARD_DB_PATH | data/dashboard.db | Path to the SQLite database file |
| NODE_ENV | development | Set to production to serve built client from client/dist/ |

Hook Configuration

The server writes the following to ~/.claude/settings.json on every startup:

{
  "hooks": {
    "SessionStart": [
      { "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" SessionStart" }] }
    ],
    "PreToolUse": [
      { "matcher": "*", "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" PreToolUse" }] }
    ],
    "PostToolUse": [
      { "matcher": "*", "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" PostToolUse" }] }
    ],
    "Stop": [{ "matcher": "*", "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" Stop" }] }],
    "SubagentStop": [{ "matcher": "*", "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" SubagentStop" }] }],
    "Notification": [{ "matcher": "*", "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" Notification" }] }],
    "SessionEnd": [
      { "hooks": [{ "type": "command", "command": "node \"/path/to/scripts/hook-handler.js\" SessionEnd" }] }
    ]
  }
}

Existing hooks are preserved. The installer only adds or updates entries containing hook-handler.js.
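The "preserve existing hooks, replace only our own" rule can be sketched as a pure merge over the hooks object. This is an illustration under stated assumptions, not the project's installer: the function name `mergeMonitorHooks`, the type names, and the choice to omit `matcher` on the new entries are all hypothetical. Only the marker string `hook-handler.js` and the command shape come from the configuration above.

```typescript
type HookCommand = { type: string; command: string };
type HookGroup = { matcher?: string; hooks: HookCommand[] };
type HooksConfig = Record<string, HookGroup[]>;

// Entries whose command contains this marker are treated as owned by the monitor.
const MONITOR_MARKER = "hook-handler.js";

// Hypothetical merge: keeps every unrelated hook, drops stale monitor entries,
// and appends one fresh monitor entry per requested hook type.
function mergeMonitorHooks(
  existing: HooksConfig,
  handlerPath: string,
  hookTypes: string[],
): HooksConfig {
  const merged: HooksConfig = {};
  for (const [name, groups] of Object.entries(existing)) {
    merged[name] = groups;
  }
  for (const hookType of hookTypes) {
    const command = `node "${handlerPath}" ${hookType}`;
    const kept = (merged[hookType] ?? [])
      // Remove only monitor-owned commands inside each group...
      .map((group) => ({
        ...group,
        hooks: group.hooks.filter((h) => !h.command.includes(MONITOR_MARKER)),
      }))
      // ...and drop groups that became empty as a result.
      .filter((group) => group.hooks.length > 0);
    merged[hookType] = [...kept, { hooks: [{ type: "command", command }] }];
  }
  return merged;
}

// Example: a user-defined hook plus a stale monitor entry from an old checkout.
const existingHooks: HooksConfig = {
  PreToolUse: [
    { matcher: "Bash", hooks: [{ type: "command", command: "echo user-hook" }] },
    { hooks: [{ type: "command", command: 'node "/old/scripts/hook-handler.js" PreToolUse' }] },
  ],
};
const installedOnce = mergeMonitorHooks(existingHooks, "/path/to/scripts/hook-handler.js", [
  "SessionStart",
  "PreToolUse",
]);
const installedTwice = mergeMonitorHooks(installedOnce, "/path/to/scripts/hook-handler.js", [
  "SessionStart",
  "PreToolUse",
]);
```

Because stale monitor entries are removed before the fresh one is appended, running the merge a second time produces the same structure as the first run, which is the idempotence property the installer relies on.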

Scripts Reference

| Script | Command | Description |
|---|---|---|
| setup | npm run setup | Install all dependencies (server + client) |
| dev | npm run dev | Start server + client in development mode with hot reload |
| dev:server | npm run dev:server | Start only the Express server with --watch |
| dev:client | npm run dev:client | Start only the Vite dev server |
| build | npm run build | TypeScript check + Vite production build to client/dist/ |
| start | npm start | Start Express in production mode serving built client |
| install-hooks | npm run install-hooks | Manually write Claude Code hooks to ~/.claude/settings.json |
| seed | npm run seed | Insert demo sessions, agents, and events (8 sessions / 23 agents / 106 events) |
| import-history | npm run import-history | Import historical Claude Code sessions from ~/.claude with deep JSONL extraction (API errors, turn durations, thinking blocks, subagent data) |
| clear-data | npm run clear-data | Delete all data from the database (keeps schema) |
| test | npm test | Run all server and client tests |
| test:server | npm run test:server | Server integration tests only (Node built-in test runner) |
| test:client | npm run test:client | Client unit tests only (Vitest + Testing Library) |
| mcp:install | npm run mcp:install | Install dependencies for the local MCP package under mcp/ |
| mcp:typecheck | npm run mcp:typecheck | Type-check MCP source without emitting build output |
| mcp:build | npm run mcp:build | Compile MCP server into mcp/build/ |
| mcp:start | npm run mcp:start | Start MCP server (stdio transport, for MCP hosts) |
| mcp:start:http | npm run mcp:start:http | Start MCP HTTP+SSE server on port 8819 (Streamable HTTP + legacy SSE) |
| mcp:start:repl | npm run mcp:start:repl | Start interactive MCP REPL with tab completion and colored output |
| mcp:dev | npm run mcp:dev | Run MCP server in dev mode with tsx (stdio) |
| mcp:dev:http | npm run mcp:dev:http | Run MCP HTTP server in dev mode with tsx |
| mcp:dev:repl | npm run mcp:dev:repl | Run MCP REPL in dev mode with tsx |
| mcp:docker:build | npm run mcp:docker:build | Build MCP container image with Docker (agent-dashboard-mcp:local) |
| mcp:podman:build | npm run mcp:podman:build | Build MCP container image with Podman (localhost/agent-dashboard-mcp:local) |
| test:mcp | npm run test:mcp | Run MCP server unit tests |
| format | npm run format | Format all files with Prettier |
| format:check | npm run format:check | Check formatting without writing |

System Architecture

Core dashboard telemetry is composed of three processes (Claude hook source, dashboard server, browser UI). When the local MCP sidecar is enabled, it integrates with the same dashboard API via stdio, HTTP+SSE, or interactive REPL transport.

Full system architecture — Claude Code process → Hook Layer → Server → Browser

Agent State Machine

Agent status transitions driven by hook events. waiting is a real persisted status — agents start as waiting and return to it after each turn. Error recovery requires active user retry (UserPromptSubmit or PreToolUse). A background watchdog detects API errors in transcripts every 15 s.
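The transitions described above can be summarized as a small reducer over the main agent's state. This is a simplified sketch assembled from the hook table in this document, not the server's actual code: the type names and `nextMainAgentState` are hypothetical, subagents and notifications are left out, and the choice to keep an agent in error on PostToolUse is an assumption derived from the statement that recovery requires UserPromptSubmit or PreToolUse.

```typescript
type AgentStatus = "waiting" | "working" | "completed" | "error";

interface AgentState {
  status: AgentStatus;
  currentTool: string | null;
}

type HookEvent =
  | { type: "SessionStart" }
  | { type: "UserPromptSubmit" }
  | { type: "PreToolUse"; toolName: string }
  | { type: "PostToolUse" }
  | { type: "Stop"; stopReason?: string }
  | { type: "SessionEnd" };

// Hypothetical reducer for the main agent only.
function nextMainAgentState(state: AgentState, event: HookEvent): AgentState {
  switch (event.type) {
    case "SessionStart":
      // A fresh CLI sits at the prompt, so the agent starts as waiting.
      return { status: "waiting", currentTool: null };
    case "UserPromptSubmit":
      // Active user retry: also the documented way out of the error state.
      return { status: "working", currentTool: state.currentTool };
    case "PreToolUse":
      return { status: "working", currentTool: event.toolName };
    case "PostToolUse":
      // Assumption: a finished tool call does not recover an errored agent.
      return {
        status: state.status === "error" ? "error" : "working",
        currentTool: null,
      };
    case "Stop":
      return {
        status: event.stopReason === "error" ? "error" : "waiting",
        currentTool: null,
      };
    case "SessionEnd":
      // Error state is preserved; everything else is marked completed.
      return {
        status: state.status === "error" ? "error" : "completed",
        currentTool: null,
      };
  }
}

// Example: one tool-using turn, starting from a new session.
const turn: HookEvent[] = [
  { type: "SessionStart" },
  { type: "UserPromptSubmit" },
  { type: "PreToolUse", toolName: "Bash" },
  { type: "PostToolUse" },
  { type: "Stop" },
];
const afterTurn = turn.reduce(nextMainAgentState, { status: "waiting", currentTool: null } as AgentState);
// afterTurn.status is "waiting": the agent returns to waiting after the turn.
```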

Session State Machine

Session status lifecycle. waiting is a UI overlay — persisted as active with awaiting_input_since set. SessionEnd preserves error state. Error recovery requires UserPromptSubmit or PreToolUse.
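Because waiting is only an overlay for sessions, the status shown in the UI has to be derived from two persisted fields. A minimal sketch follows, assuming a row shape with `status` and `awaiting_input_since`; the column name comes from this document, while the exact set of persisted status values and the function name `sessionDisplayStatus` are assumptions.

```typescript
// Assumed persisted values; "waiting" is never stored for sessions.
type PersistedSessionStatus = "active" | "completed" | "error" | "abandoned";
type SessionDisplayStatus = PersistedSessionStatus | "waiting";

interface SessionRow {
  status: PersistedSessionStatus;
  awaiting_input_since: string | null; // ISO timestamp, or null when not waiting
}

// Hypothetical derivation of the status shown in the UI.
function sessionDisplayStatus(row: SessionRow): SessionDisplayStatus {
  if (row.status === "active" && row.awaiting_input_since !== null) {
    return "waiting";
  }
  return row.status;
}

const atPrompt = sessionDisplayStatus({
  status: "active",
  awaiting_input_since: "2026-04-18T15:48:34.123Z",
});
const midTurn = sessionDisplayStatus({ status: "active", awaiting_input_since: null });
// atPrompt is "waiting"; midTurn is "active".
```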

Data Flow

Event Ingestion Pipeline

Sequence (HH = hook handler, API = dashboard server, TX = SQLite transaction, WS = WebSocket server, UI = browser):

1. HH: parses JSON and adds hook_type
2. HH → API: POST {"hook_type":"PreToolUse","data":{...}}
3. API → TX: BEGIN TRANSACTION
4. TX: ensureSession(session_id), which creates session + main agent on first contact
5. TX: process by hook_type
   - SessionStart -> stamp awaiting flag (Waiting)
   - UserPromptSubmit -> clear flag, agent working
   - PreToolUse -> clear flag, agent working
   - PostToolUse -> clear flag, clear current_tool
   - Stop (non-error) -> agent waiting
   - Stop (error) -> agent error, session error
   - SubagentStop -> complete matched subagent (does NOT touch flag); fire-and-forget scanAndImportSubagents emits per-tool Pre/PostToolUse events under each subagent's own agent_id
   - Notification (permission) -> stamp flag
   - SessionEnd -> drop flag, mark all completed
6. TX: insertEvent(...)
7. TX: COMMIT
8. API → WS: broadcast("agent_updated", agent)
9. API → WS: broadcast("new_event", event)
10. WS → UI: {"type":"agent_updated","data":{...}}
11. UI: eventBus.publish -> page re-renders

Complete event ingestion from hook fire to browser re-render

Client Data Loading Pattern

Initial load + WebSocket subscription lifecycle

Server Architecture

Dependency summary: server/index.js (Express app + HTTP server) wires up server/db.js (SQLite + prepared statements, better-sqlite3 / node:sqlite fallback), server/websocket.js (WS server + heartbeat), and every route module. routes/hooks.js, routes/sessions.js, and routes/agents.js depend on both the database and the WebSocket server. routes/events.js, routes/stats.js, routes/analytics.js, routes/pricing.js, routes/settings.js, and routes/workflows.js depend on the database only.

Server module dependency graph

Server Modules

| Module | Responsibility |
|---|---|
| server/index.js | Express app setup, middleware (CORS, JSON 1MB limit), route mounting, static serving in production, HTTP server, auto-hook installation on startup |
| server/db.js | SQLite connection, WAL/FK pragmas, schema migrations (CREATE TABLE IF NOT EXISTS), all prepared statements as a reusable stmts object. Tries better-sqlite3 first, falls back to built-in node:sqlite via compat-sqlite.js |
| server/compat-sqlite.js | Compatibility wrapper giving Node.js built-in node:sqlite (DatabaseSync) the same API as better-sqlite3: pragma, transaction, prepare. Used as automatic fallback on Node 22+ |
| server/websocket.js | WebSocket server on /ws path, 30s ping/pong heartbeat, typed broadcast(type, data) function |
| routes/hooks.js | Core event processing inside SQLite transactions. Auto-creates sessions/agents. Switch-case dispatch by hook type. Extracts token usage from Stop events. |
| routes/sessions.js | CRUD with pagination. GET includes agent count via LEFT JOIN. POST is idempotent on session ID. |
| routes/agents.js | CRUD with status/session_id filtering. PATCH broadcasts agent_updated. |
| routes/events.js | Read-only event listing with session_id filter and pagination. |
| routes/stats.js | Single aggregate query: total/active counts, status distributions, WS connection count. |
| routes/analytics.js | Extended analytics: token totals, tool usage counts, daily event/session trends, agent type distribution, event type breakdown, average events per session. |
| routes/pricing.js | Model pricing CRUD (list, upsert, delete). Per-session and global cost calculation with pattern-based model matching and specificity sorting. |
| routes/settings.js | System info (DB size, row counts, hook status, server uptime). Data export as JSON. Session cleanup (abandon stale active sessions, purge old completed sessions). Clear all data. Reset pricing to defaults. Reinstall hooks. |
| routes/workflows.js | Aggregate workflow visualization data (agent orchestration, tool transitions, collaboration networks, workflow patterns, model delegation, error propagation, concurrency, session complexity, compaction impact). Accepts ?status=active\|completed filter. Per-session drill-in with agent tree, tool timeline, and events. |

Client Architecture

React component tree

Client Routes

Key Client Modules

| Module | Purpose |
|---|---|
| lib/api.ts | Typed fetch wrapper with one method per REST endpoint. All return typed promises. |
| lib/types.ts | TypeScript interfaces: Session, Agent, DashboardEvent, Stats, Analytics, WSMessage, plus all workflow-related types (WorkflowData, SessionDrillIn, etc). Status config maps. |
| lib/eventBus.ts | Set-based pub/sub. subscribe(fn) returns an unsubscribe function for clean useEffect teardown. |
| lib/format.ts | Date/time formatting helpers: relative time, duration, ISO display. |
| hooks/useWebSocket.ts | Auto-reconnecting WebSocket React hook. 2-second reconnect interval. Publishes messages to eventBus. |

State Management

The client deliberately avoids Redux / Zustand / Context. Each page owns its data and lifecycle. WebSocket events trigger a reload or append — no complex state merging.

Each page pulls initial data from REST then subscribes to eventBus for live updates

There is no cross-page shared state. Each page fetches and owns exactly the data it displays. This simplifies debugging and avoids stale-closure hazards that are common with global stores in long-running WebSocket apps.
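The Set-based pub/sub that pages subscribe to can be sketched in a few lines. This is an illustration of the pattern described for lib/eventBus.ts, not its actual source: `createEventBus` and the message shape used in the example are assumptions, while `subscribe` returning an unsubscribe function and `publish` follow the descriptions in this document.

```typescript
type Listener<T> = (message: T) => void;

// Hypothetical factory for a Set-based event bus.
function createEventBus<T>() {
  const listeners = new Set<Listener<T>>();
  return {
    // Returns an unsubscribe function, convenient as a useEffect cleanup.
    subscribe(fn: Listener<T>): () => void {
      listeners.add(fn);
      return () => {
        listeners.delete(fn);
      };
    },
    publish(message: T): void {
      // Iterate over a snapshot so listeners may unsubscribe while being notified.
      Array.from(listeners).forEach((fn) => fn(message));
    },
  };
}

// Example: a page subscribes, receives one message, then tears down.
const bus = createEventBus<{ type: string }>();
const seen: string[] = [];
const unsubscribe = bus.subscribe((message) => {
  seen.push(message.type);
});
bus.publish({ type: "new_event" });
unsubscribe();
bus.publish({ type: "agent_updated" }); // not delivered: the page has unsubscribed
// seen is ["new_event"]
```

In a React page, the value returned by `subscribe` can be returned directly from a `useEffect` callback, so the listener is removed when the page unmounts.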

Database Design

Entity Relationship Diagram — SQLite schema

Indexes

| Index | Table | Column(s) | Purpose |
|---|---|---|---|
| idx_agents_session | agents | session_id | Fast agent lookup by session |
| idx_agents_status | agents | status | Kanban column queries |
| idx_events_session | events | session_id | Session detail event list |
| idx_events_type | events | event_type | Filter events by type |
| idx_events_created | events | created_at DESC | Activity feed ordering |
| idx_sessions_status | sessions | status | Status filter on sessions page |
| idx_sessions_started | sessions | started_at DESC | Default sort order |

SQLite Configuration

| Pragma | Value | Rationale |
|---|---|---|
| journal_mode | WAL | Concurrent reads during writes. Far better for read-heavy dashboards. |
| foreign_keys | ON | Referential integrity: prevents orphaned agents/events. |
| busy_timeout | 5000 | Wait up to 5s for write lock instead of failing immediately under load. |

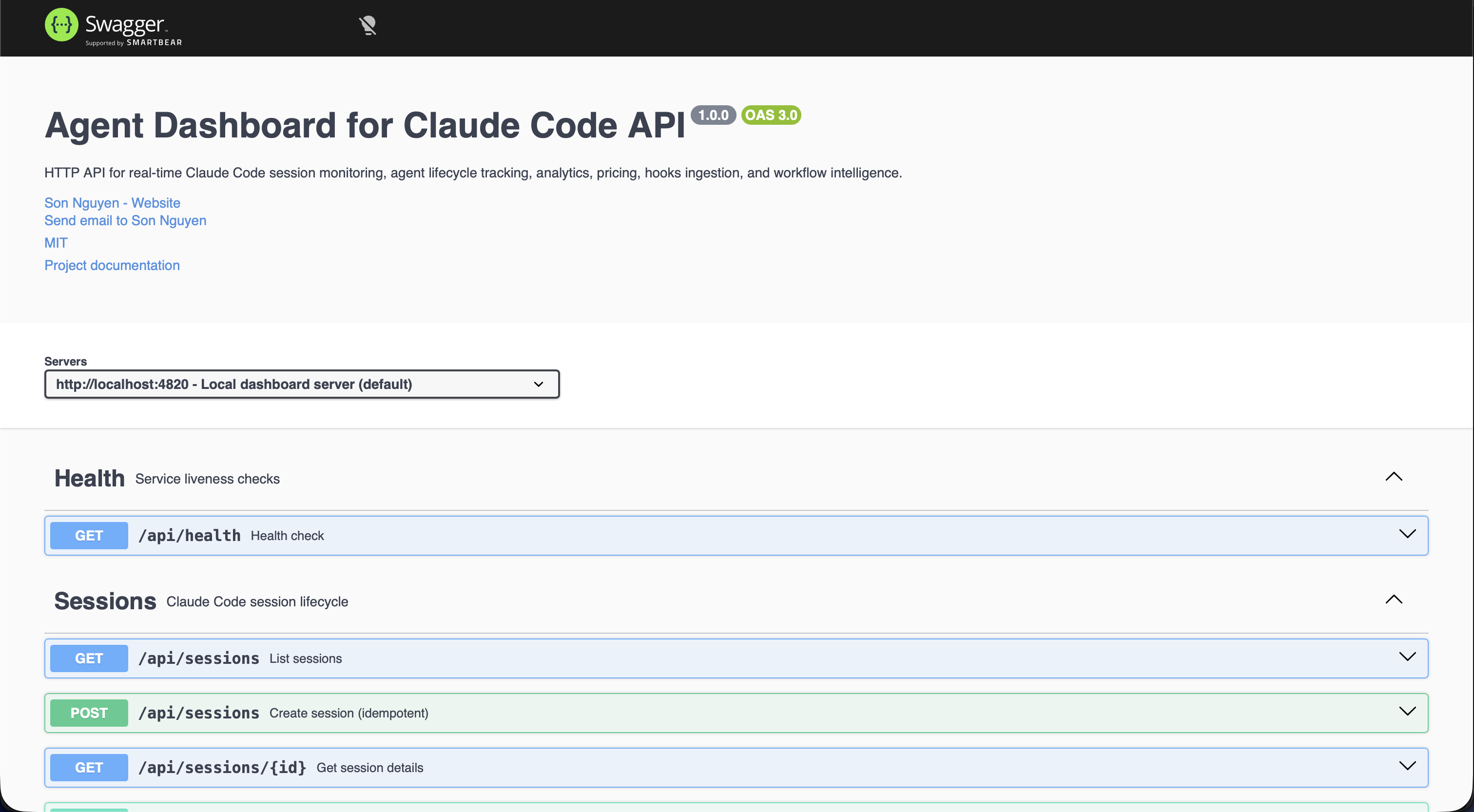

API Reference

All endpoints return JSON. Errors follow { "error": { "code", "message" } }. The full OpenAPI 3.0 spec is served at /api/openapi.json and rendered as interactive Swagger UI at /api/docs.

/api/docs: interactive playground for every endpoint

Health

{ "status": "ok", "timestamp": "..." }

Sessions

Query parameters: status, q (case-insensitive search across id/name/cwd), limit (default 50, max 10000), offset. Response includes total for paginators.

id)

Agents

Query parameters: status, session_id, limit, offset

Events, Stats, Analytics

Query parameters: session_id, limit, offset

Hooks Ingestion

Pricing

Settings

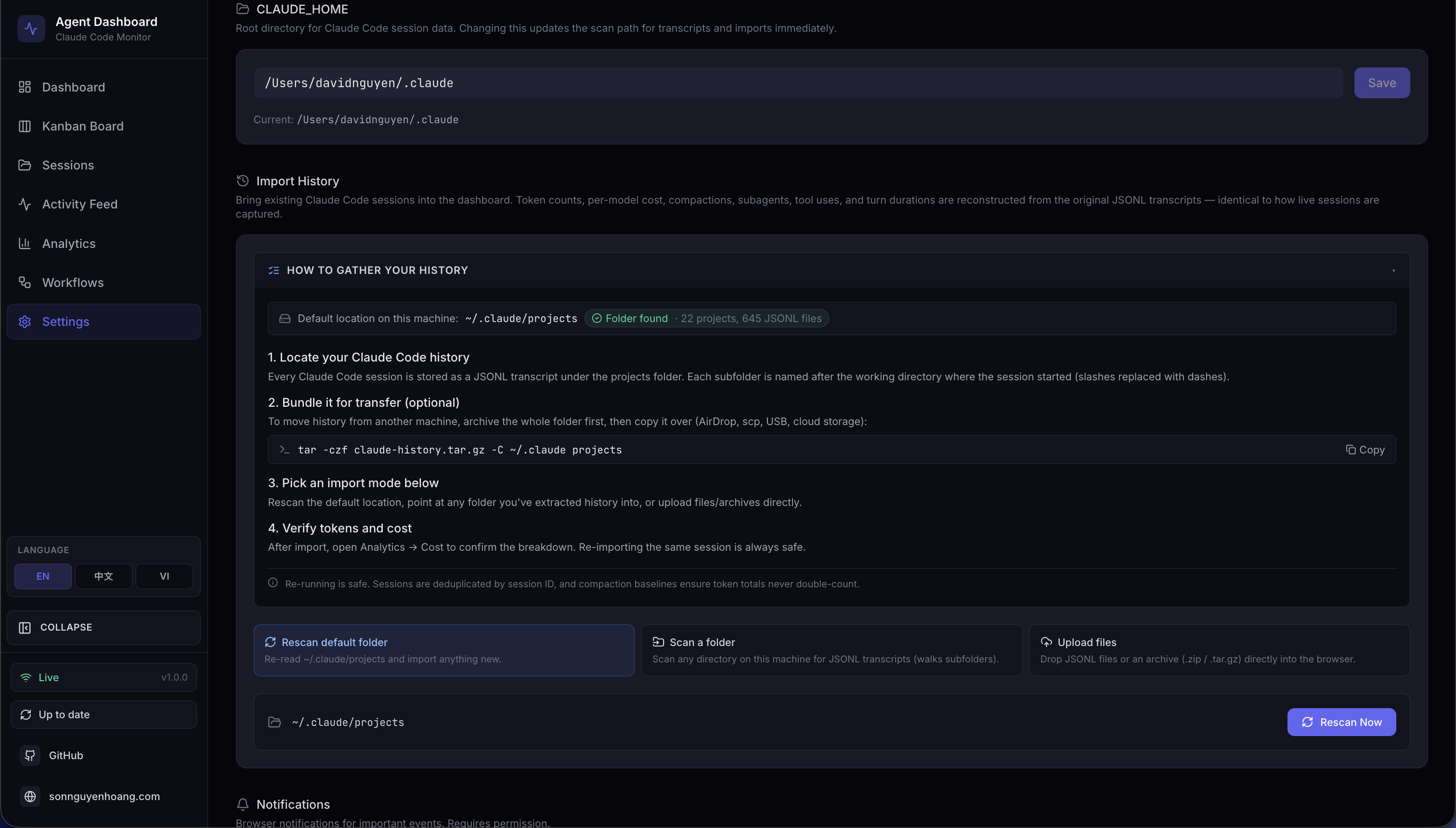

Import History

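| Method | Path | Description |
|---|---|---|
| GET | /api/import/guide | OS-aware instructions and stats for the default ~/.claude/projects folder |
| POST | /api/import/rescan | Rescans the default ~/.claude/projects directory; safe to re-run (idempotent via session-ID dedup) |
| POST | /api/import/scan-path | Scans an arbitrary absolute directory (body { path }); tilde (~) is expanded; walks subdirectories recursively and imports every .jsonl found |
| POST | /api/import/upload | Multipart upload accepting .jsonl, .meta.json, .zip, .tar, .tar.gz, .tgz, .gz. Per-request staging dir, path-traversal and extraction-size guards. Returns 413 EXTRACTION_LIMIT_EXCEEDED on suspected bomb archives |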

Workflows

Accepts the ?status=active|completed query param to filter by workflow status

Example request body for hook ingestion:

{
  "hook_type": "PreToolUse",
  "data": {
    "session_id": "abc-123",
    "tool_name": "Bash",
    "tool_input": { "command": "ls -la" }
  }
}

WebSocket Protocol

| Property | Value |
|---|---|
| Path | ws://localhost:4820/ws |
| Protocol | Standard WebSocket (RFC 6455) |
| Heartbeat | Server pings every 30s; clients that don't pong are terminated |
| Reconnect | Client retries every 2 seconds on disconnect |

Message Envelope

// All messages use this shape
{
  type: "session_created" | "session_updated" | "agent_created" | "agent_updated" | "new_event";
  data: Session | Agent | DashboardEvent;
  timestamp: string; // ISO 8601
}

Client WebSocket auto-reconnect state machine
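A client can validate incoming frames against the envelope before publishing them to the event bus. The sketch below is one hedged way to do that: `parseWSMessage` and `MESSAGE_TYPES` are hypothetical names, `data` is kept as `unknown` rather than the real Session / Agent / DashboardEvent types, and frames with other type values (for example the import progress messages) are rejected here and would need their own handling.

```typescript
// The five message types listed in the envelope above.
const MESSAGE_TYPES = [
  "session_created",
  "session_updated",
  "agent_created",
  "agent_updated",
  "new_event",
] as const;

type MessageType = (typeof MESSAGE_TYPES)[number];

interface ParsedWSMessage {
  type: MessageType;
  data: unknown; // the real client would narrow this to Session | Agent | DashboardEvent
  timestamp: string; // ISO 8601
}

// Hypothetical parser: returns null for anything that does not match the envelope.
function parseWSMessage(raw: string): ParsedWSMessage | null {
  let value: unknown;
  try {
    value = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof value !== "object" || value === null) return null;
  const candidate = value as Record<string, unknown>;
  const type = candidate["type"];
  const timestamp = candidate["timestamp"];
  if (typeof type !== "string" || typeof timestamp !== "string") return null;
  if (!(MESSAGE_TYPES as readonly string[]).includes(type)) return null;
  return { type: type as MessageType, data: candidate["data"], timestamp };
}

const accepted = parseWSMessage(
  '{"type":"agent_updated","data":{"id":"a1"},"timestamp":"2026-04-18T15:48:34.123Z"}',
);
const rejected = parseWSMessage('{"type":"unknown_type","data":{}}');
// accepted is a ParsedWSMessage; rejected is null.
```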

Hook Integration

Hook Handler Design

scripts/hook-handler.js is a minimal, fail-safe forwarder. It always exits 0 so it can never block Claude Code regardless of server state.

hook-handler.js flow — always exits 0, never blocks Claude Code

Hook Installation Flow

Hook installation is idempotent — safe to run multiple times

Import Pipeline

The dashboard ships with a first-class history importer that backfills sessions, agents, events, tokens, and costs from Claude Code JSONL transcripts. Live hook ingestion and manual import share the exact same parser (parseSessionFile + importSession in scripts/import-history.js), which is the architectural contract that guarantees imported token totals and cost values are identical to those captured in real time. Re-imports are idempotent: session IDs are the dedup key and compaction baseline_* columns preserve pre-compaction token totals.

Three Modes, One Pipeline

All three modes funnel into the same parser and DB transaction — imported numbers match live capture bit-for-bit

Upload Request Sequence

Upload path: multipart → safe extract → walk → parse → import; every temp dir reclaimed in finally

Idempotence & Cost Accuracy

The baseline_* columns make cost monotonic under re-imports: compacted sessions retain pre-compaction usage for billing

Supported Source Layouts

| Layout | Example | Handling |
|---|---|---|
| Default Claude Code | <proj>/<sid>.jsonl | Session transcript: extracts tokens, compactions, tool uses, turn durations |
| Default subagent | <proj>/<sid>/subagents/agent-*.jsonl | Paired with parent on discovery via findSessionSubagents |
| Alternative subagent | <proj>/subagents/<sid>/agent-*.jsonl | Paired with parent on discovery (second layout probed automatically) |
| Orphan subagent | No parent JSONL in source, but sid exists in DB | importFromDirectory probes both layouts; attaches if the parent is found |
| Flat JSONL drop | <root>/<sid>.jsonl | Recognized as a loose session transcript |
| Archives | .zip, .tar, .tar.gz, .tgz | Extracted into a per-request temp dir, then walked by the same importer |
| Single-file gzip | any.jsonl.gz | Gunzipped in streaming mode with running byte-counter size cap |

Safety Model

| Threat | Mitigation |
|---|---|
| Path traversal via archive entries | archive.safeJoin resolves under the extraction root; any .. or absolute path returns null |
| Zip / tar / gzip bombs | MAX_EXTRACT_BYTES (default 4 GB) enforced by running byte counter; aborts with ExtractionLimitError → HTTP 413 |
| Per-file upload size abuse | multer limits.fileSize = MAX_UPLOAD_BYTES (default 1 GB) |
| Too many files per request | multer limits.files = MAX_UPLOAD_FILES (default 2000) |
| Unsupported file types | fileFilter drops them early and reports them in rejected_files[] |
| Concurrent upload temp-dir collisions | Per-request temp dir on req._ccamUploadDir; created in multer destination, reclaimed in finally |
| Arbitrary absolute path on scan-path | Validated: must be absolute (after ~ expansion), exist, and be a directory |
| Relative / traversal paths on scan-path | Rejected with INVALID_INPUT |
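The path-traversal guard in the first row can be illustrated with a dependency-free sketch. This is not the project's archive.safeJoin: it is a simplified, string-based version that assumes forward-slash output paths and treats any `..` segment or absolute entry name as hostile, which mirrors the "returns null" behaviour described in the table. The parameter names are hypothetical.

```typescript
// Simplified sketch of a safe join for archive entry names.
// Returns the destination path under `root`, or null if the entry is unsafe.
function safeJoin(root: string, entryName: string): string | null {
  // Reject absolute paths: POSIX ("/etc"), UNC or backslash-rooted, and drive letters ("C:\").
  if (entryName.startsWith("/") || entryName.startsWith("\\")) return null;
  if (/^[A-Za-z]:[\\/]/.test(entryName)) return null;

  const segments: string[] = [];
  for (const segment of entryName.split(/[\\/]+/)) {
    if (segment === "" || segment === ".") continue; // harmless, skip
    if (segment === "..") return null; // any traversal attempt is rejected outright
    segments.push(segment);
  }
  if (segments.length === 0) return null; // nothing left to write

  return root.replace(/\/+$/, "") + "/" + segments.join("/");
}

const safeTarget = safeJoin("/tmp/extract", "project/session.jsonl");
const traversal = safeJoin("/tmp/extract", "../../etc/passwd");
const absolute = safeJoin("/tmp/extract", "/etc/passwd");
// safeTarget is "/tmp/extract/project/session.jsonl"; traversal and absolute are null.
```

Rejecting every `..` segment is stricter than resolving and then checking the prefix, but it is easier to reason about and never writes outside the extraction root.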

Environment Variables

| Variable | Default | Purpose |
|---|---|---|
| CCAM_IMPORT_MAX_BYTES | 1 GB | Maximum size per uploaded file on /api/import/upload |
| CCAM_IMPORT_MAX_FILES | 2000 | Maximum files per upload request |
| CCAM_IMPORT_MAX_EXTRACT_BYTES | 4 GB | Ceiling on total uncompressed bytes from any single archive (zip-bomb defense) |

WebSocket Progress Events

Every import emits import.progress messages on /ws. Messages are throttled to at most one every ~150 ms to avoid flooding the channel on multi-thousand-session imports; the terminal complete and error frames are never throttled.

{

"type": "import.progress",

"timestamp": "2026-04-18T15:48:34.123Z",

"data": {

"importId": "upload-1729264114000",

"phase": "parse",

"source": "upload",

"processed": 184,

"total": 512,

"current": "/tmp/ccam-import-work-xyz/project/<uuid>.jsonl",

"counters": { "imported": 120, "backfilled": 40, "skipped": 20, "errors": 4 }

}

}

Phases: start → scan → extract (upload only) → parse → complete, with error / extract_error replacing complete on failure.
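The throttling rule for progress messages (at most one frame per ~150 ms, terminal frames always delivered) can be sketched as a small wrapper around a send function. The names `createProgressThrottle` and `ProgressFrame` are hypothetical, the clock is injectable only to keep the example deterministic, and treating extract_error as a terminal phase alongside complete and error is an assumption.

```typescript
interface ProgressFrame {
  phase: string;
  processed: number;
  total: number;
}

// Assumption: these phases end an import and must never be dropped.
const TERMINAL_PHASES = new Set(["complete", "error", "extract_error"]);

// Returns a function that forwards frames to `send`, dropping non-terminal
// frames that arrive less than `minIntervalMs` after the last forwarded one.
function createProgressThrottle(
  send: (frame: ProgressFrame) => void,
  minIntervalMs = 150,
  now: () => number = () => Date.now(),
) {
  let lastSentAt = Number.NEGATIVE_INFINITY;
  return (frame: ProgressFrame): boolean => {
    const current = now();
    const isTerminal = TERMINAL_PHASES.has(frame.phase);
    if (!isTerminal && current - lastSentAt < minIntervalMs) {
      return false; // throttled
    }
    lastSentAt = current;
    send(frame);
    return true;
  };
}

// Example with a fake clock so the outcome does not depend on real time.
let fakeTime = 0;
const delivered: ProgressFrame[] = [];
const emit = createProgressThrottle((frame) => delivered.push(frame), 150, () => fakeTime);

emit({ phase: "parse", processed: 1, total: 4 }); // t=0: sent
fakeTime = 50;
emit({ phase: "parse", processed: 2, total: 4 }); // t=50: dropped
fakeTime = 200;
emit({ phase: "parse", processed: 3, total: 4 }); // t=200: sent
fakeTime = 210;
emit({ phase: "complete", processed: 4, total: 4 }); // t=210: terminal, always sent
// delivered now holds three frames: processed 1, 3, and 4.
```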

MCP & Agent Extensions

In addition to dashboard telemetry, this project includes a production-grade local MCP server and complete extension scaffolding for both Claude Code and Codex. This gives agents a richer local tool surface while keeping all execution local-first. The MCP server supports three transport modes: stdio for host integration, HTTP+SSE for remote clients, and an interactive REPL for operator debugging.

Local extension architecture: host instructions + skills + multi-transport MCP sidecar

Local MCP Server Runtime

The mcp/ package exposes dashboard-oriented tools for AI agents across three transport modes. Mutation and destructive operations are policy-gated by environment variables and disabled by default. HTTP mode serves both Streamable HTTP (protocol 2025-11-25) and legacy SSE (protocol 2024-11-05). REPL mode provides tab-completed interactive tool invocation with colored output and JSON syntax highlighting.

| Component | Location | Notes |
|---|---|---|
| MCP source | mcp/src/ | TypeScript server, tools, policy guards, transport layer, CLI UI |
| MCP build output | mcp/build/ | Compiled JavaScript runtime for all transport modes |
| MCP docs | mcp/README.md | Tool catalog, architecture diagrams, host integration examples, REPL guide |
| Transport layer | mcp/src/transports/ | HTTP+SSE server, interactive REPL, tool handler collector |
| CLI UI | mcp/src/ui/ | ANSI banner, colors, formatter with tables, boxes, JSON highlighting |
| Runtime commands | npm run mcp:start\|start:http\|start:repl\|dev\|dev:http\|dev:repl | Start MCP in stdio, HTTP+SSE, or REPL mode (production or dev) |

Agent Extension Layout

| Target | Files | Purpose |
|---|---|---|
| Claude Code | CLAUDE.md, .claude/rules/ | Persistent project instructions + path-scoped coding rules |
| Claude Code Skills | .claude/skills/ | Reusable workflows (onboarding, shipping, MCP ops, live debugging) |
| Claude Code Subagents | .claude/agents/ | Specialized reviewers for backend, frontend, and MCP code paths |
| Codex Base Instructions | AGENTS.md, .codex/rules/default.rules | Project-wide guidance + execution policy defaults |
| Codex Skills | .codex/skills/ | Task-specific skills aligned to this repository |
| Codex Agents | .codex/agents/ | Reusable custom-agent templates for implementation and review |

Root Helper Scripts

| Script | Role |
|---|---|
| scripts/hook-handler.js | Receives Claude hook payloads over stdin and forwards them to dashboard API |
| scripts/install-hooks.js | Writes/updates hook registration in ~/.claude/settings.json |
| scripts/import-history.js | Batch history importer used by server startup auto-import, the /api/import/* routes, and the import-history CLI. Exposes importAllSessions() for the default projects dir and the generalized importFromDirectory(dbModule, rootDir, {onProgress}) which walks any directory recursively, classifies session vs subagent JSONLs (probes both <proj>/<sid>/subagents/* and <proj>/subagents/<sid>/* layouts), and funnels everything through the shared parseSessionFile + importSession pipeline, identical to live ingest. Re-imports are idempotent (session-ID dedup + baseline_* preservation). Extracts tokens, API errors, turn durations, thinking blocks, usage extras, and per-subagent breakdowns |
| server/routes/import.js | Express router for Import History. Four endpoints: GET /api/import/guide (OS-aware instructions + default-dir stats), POST /api/import/rescan (default ~/.claude/projects), POST /api/import/scan-path (arbitrary absolute dir with ~ expansion), POST /api/import/upload (multer multipart). Each request uses a per-request temp dir reclaimed in finally. Progress broadcast as throttled import.progress WebSocket messages. Limits tunable via CCAM_IMPORT_MAX_BYTES, CCAM_IMPORT_MAX_FILES, CCAM_IMPORT_MAX_EXTRACT_BYTES |
| server/lib/archive.js | Safe archive extraction: .zip via adm-zip, .tar/.tar.gz/.tgz via tar, plain .gz streaming via zlib. Every entry validated through safeJoin which rejects absolute paths and .. traversal before any bytes are written. Enforces a hard MAX_EXTRACT_BYTES cap (default 4 GB) with ExtractionLimitError surfaced as HTTP 413, a defense against zip/tar/gzip bombs |
| scripts/seed.js | Loads deterministic demo data for testing and demos |
| scripts/clear-data.js | Removes persisted rows while preserving schema |

Plugin Marketplace

The Agent Monitor ships with an official Claude Code plugin marketplace containing five production-ready plugins. These extend Claude Code with skills, agents, hooks, CLI tools, and MCP integration — all grounded in the real data model (token tracking with compaction baselines, cost calculation via pattern-matched pricing rules, workflow intelligence with 11 datasets per session, and session metadata including thinking blocks, turn counts, and inference geography).

Installation

# Add the marketplace

claude plugin marketplace add hoangsonww/Claude-Code-Agent-Monitor

# Install individual plugins

claude plugin install ccam-analytics@hoangsonww-claude-code-agent-monitor

claude plugin install ccam-productivity@hoangsonww-claude-code-agent-monitor

claude plugin install ccam-devtools@hoangsonww-claude-code-agent-monitor

claude plugin install ccam-insights@hoangsonww-claude-code-agent-monitor

claude plugin install ccam-dashboard@hoangsonww-claude-code-agent-monitor

Available Plugins

| Plugin | Skills | Agent | CLI Tools | Focus |
|---|---|---|---|---|
| ccam-analytics | session-report, cost-breakdown, usage-trends, productivity-score | analytics-advisor | ccam-stats | Token usage (4 types + baselines), cost via pricing engine, daily trends, productivity scoring |
| ccam-productivity | daily-standup, weekly-report, sprint-summary, workflow-optimizer | productivity-coach | none | Standup reports, sprint tracking, workflow optimization via 11 workflow intelligence datasets |
| ccam-devtools | session-debug, hook-diagnostics, data-export, health-check | issue-triager | ccam-doctor, ccam-export | Session debugging, hook diagnostics, data export (JSON/CSV), system health |
| ccam-insights | pattern-detect, anomaly-alert, optimization-suggest, session-compare | insights-advisor | none | Pattern detection via tool flow transitions, anomaly alerting, optimization, session comparison |
| ccam-dashboard | dashboard-status, quick-stats | none | none | Dashboard connector with MCP integration and one-line metric summaries |

Skill Usage Examples

# Analytics — session report with per-model token breakdown + cost

/ccam-analytics:session-report latest

# Analytics — cost breakdown with cache efficiency analysis

/ccam-analytics:cost-breakdown this week

# Productivity — daily standup grouped by project

/ccam-productivity:daily-standup today

# Productivity — workflow optimization using workflow intelligence API

/ccam-productivity:workflow-optimizer analyze

# DevTools — debug a session's full event chain

/ccam-devtools:session-debug errors

# Insights — detect tool flow patterns and anti-patterns

/ccam-insights:pattern-detect tools

# Dashboard — one-line metrics summary

/ccam-dashboard:quick-stats

CLI Tools

# Quick terminal dashboard

ccam-stats # Sessions, costs (per-model), tokens (with baselines)

ccam-stats --cost # Cost summary with matched pricing rules

ccam-stats --tokens # Token usage including compaction baselines

ccam-stats --json # Raw JSON output

# System diagnostics

ccam-doctor # Full diagnostic: API, endpoints, database, hooks, freshness

ccam-doctor --quick # Basic connectivity check

# Data export

ccam-export sessions --format csv --limit 500

ccam-export all --output backup.json

Plugin Architecture

Each plugin follows the official Claude Code plugin specification. The marketplace manifest

at .claude-plugin/marketplace.json catalogs all five plugins. Each plugin

directory contains:

plugins/ccam-{name}/

├── .claude-plugin/plugin.json # Plugin manifest (name, version, description)

├── skills/{skill-name}/SKILL.md # Skill definitions with $ARGUMENTS

├── agents/{agent-name}.md # Agent definitions (model, tools, instructions)

├── hooks/hooks.json # Event hooks (fail-safe, non-blocking)

├── bin/{cli-tool} # CLI scripts (added to PATH)

├── .mcp.json # MCP server configuration (dashboard plugin)

└── settings.json # Plugin settings (dashboard plugin)

Data Model Reference

All plugins query the Agent Monitor API at http://localhost:4820. Key

capabilities they leverage:

| Capability | Details |

|---|---|

| Token tracking | 4 types (input, output, cache_read, cache_write) + 4 compaction baselines per model per session |

| Cost calculation | (tokens / 1M) × rate_per_mtok for each type; longest pattern match wins |

| Session metadata | thinking_blocks, turn_count, total_turn_duration_ms, usage_extras (service_tier, speed, inference_geo) |

| Event types | PreToolUse, PostToolUse, Stop, SubagentStop, SessionStart, SessionEnd, Notification, Compaction, APIError, TurnDuration |

| Workflow intelligence | 11 datasets: stats, orchestration (DAG), toolFlow, effectiveness, patterns, modelDelegation, errorPropagation, concurrency, complexity, compaction, cooccurrence |

| Agent hierarchy | Recursive parent/child tree with subagent_type, depth tracking via recursive CTE |
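As a worked illustration of the cost formula in the table above, the sketch below sums (tokens / 1M) × rate_per_mtok over the four token types. The type names and the rates in the usage note are assumptions chosen for easy arithmetic; they are not real prices, and compaction baselines are not modeled here.

```typescript
// Hypothetical sketch of the per-session cost formula.
type TokenKind = "input" | "output" | "cache_read" | "cache_write";
type PerKind = Record<TokenKind, number>;

const TOKEN_KINDS: TokenKind[] = ["input", "output", "cache_read", "cache_write"];

/** Sum (tokens / 1M) * rate_per_mtok across the four token types. */
function sessionCostUsd(tokens: PerKind, ratePerMtok: PerKind): number {
  return TOKEN_KINDS.reduce(
    (sum, kind) => sum + (tokens[kind] / 1e6) * ratePerMtok[kind],
    0,
  );
}

// Made-up token counts and made-up rates, for illustration only.
const exampleTokens: PerKind = {
  input: 2000000,
  output: 500000,
  cache_read: 4000000,
  cache_write: 1000000,
};
const exampleRates: PerKind = {
  input: 3,
  output: 15,
  cache_read: 0.5,
  cache_write: 4,
};
const exampleCost = sessionCostUsd(exampleTokens, exampleRates);
```

With these illustrative numbers the terms are 6 + 7.5 + 2 + 4, so `exampleCost` is 19.5 USD.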

📖 Full documentation: docs/plugins.md

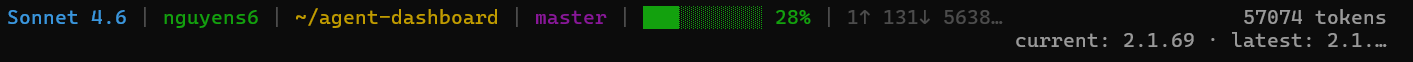

Statusline Utility

The statusline/ directory contains a standalone CLI statusline for Claude

Code — completely independent of the web dashboard. It renders a color-coded bar at the

bottom of the Claude Code terminal showing context window usage, per-direction token

counts, session cost in USD, and git branch.

nguyens6@host ~/agent-dashboard/client | Sonnet 4.6 | main | ████████░░ 79% | 3↑ 2↓ 156586c | $0.4231

| Segment | Source | Color Logic |

|---|---|---|

| Model | data.model.display_name | Always cyan |

| User | $USERNAME / $USER | Always green |

| Working Dir | data.workspace.current_dir | Always yellow, ~ prefix for home |

| Git Branch | git symbolic-ref --short HEAD | Always magenta, hidden outside git repos |

| Context Bar | data.context_window.used_percentage | Green < 50%, Yellow 50–79%, Red ≥ 80% |

| Token Counts | data.context_window.current_usage | Green ↑ input, cyan ↓ output, dim c cache reads |

| Session Cost | data.cost.total_cost_usd | Green < $5, Yellow $5–$20, Red ≥ $20 (shown on API and subscription plans) |
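The color thresholds in the table above can be expressed as two small functions. The sketch below is written in TypeScript for illustration only (the real statusline is a Python script), and the ten-cell bar with round-to-nearest filling is an assumption inferred from the sample line, not confirmed behavior.

```typescript
// Hypothetical sketch of the statusline threshold logic.
type StatusColor = "green" | "yellow" | "red";

/** Context bar: green below 50%, yellow from 50% up to 80%, red at 80% and above. */
function contextColor(usedPct: number): StatusColor {
  if (usedPct < 50) return "green";
  if (usedPct < 80) return "yellow";
  return "red";
}

/** Session cost: green below $5, yellow from $5 up to $20, red at $20 and above. */
function costColor(usd: number): StatusColor {
  if (usd < 5) return "green";
  if (usd < 20) return "yellow";
  return "red";
}

/** Render a fixed-width usage bar (assumed: 10 cells, rounded to nearest cell). */
function renderBar(usedPct: number, width: number = 10): string {
  const clamped = Math.min(100, Math.max(0, usedPct));
  const filled = Math.round((clamped / 100) * width);
  return "█".repeat(filled) + "░".repeat(width - filled);
}
```

Under these assumptions, 79% renders as eight filled cells and two empty ones, matching the sample statusline, and is colored yellow because it is still below the 80% boundary.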

Statusline rendering pipeline — invoked on each Claude Code update

Installation

Add this to ~/.claude/settings.json:

{

"statusLine": {

"type": "command",

"command": "bash \"/absolute/path/to/statusline/statusline-command.sh\""

}

}

The statusline uses only Python 3.6+ stdlib (sys, json,

os, subprocess). It fails silently on empty input or JSON

errors and never blocks Claude Code.

VS Code Extension

The Claude Code Agent Monitor VS Code extension brings the dashboard into the editor to reduce context switching: you can monitor complex subagent orchestration without leaving your active code file.

Detailed Components

A dedicated Activity Bar view that performs background polling every 5 seconds. Includes a real-time Agent Health monitor tracking all 5 states (Working, Connected, Idle, Completed, Error) with native VS Code theme-aware icons and colors.

Aggregates data from multiple API endpoints to display high-signal metrics directly in the sidebar:

- Token Consumption: Scaled tracking from 1k to 1.0B+ tokens.

- Live Cost Estimates: Automatic USD cost calculation based on model pricing rules.

- Event Frequency: Total events, daily sessions, and subagent spawning rates.

Renders the full React application within a native webview tab. Supports Deep Linking: one-click jump from the sidebar directly to specific views like the Kanban Board, Analytics Hub, or your Last 10 Sessions.

Seamlessly scans ports 5173 (Vite Dev) and 4820 (Production)

on localhost. Automatically toggles between Online and Offline

modes in the sidebar as you start or stop your local server.

The extension is designed to be plug-and-play. Once your server is running, the extension automatically discovers the API and begins streaming telemetry — no manual URL configuration required.

📖 Full developer guide: vscode-extension/README.md

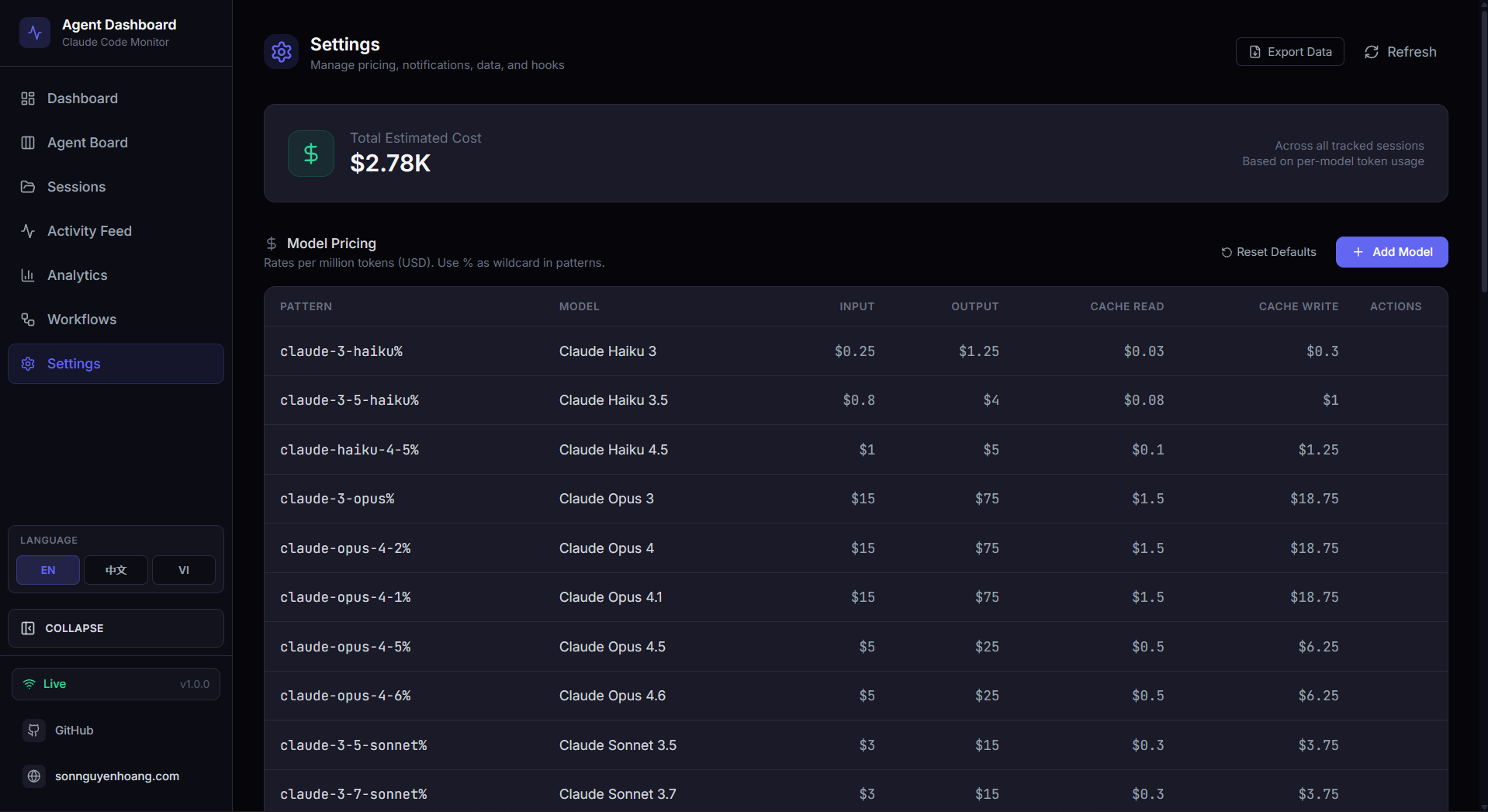

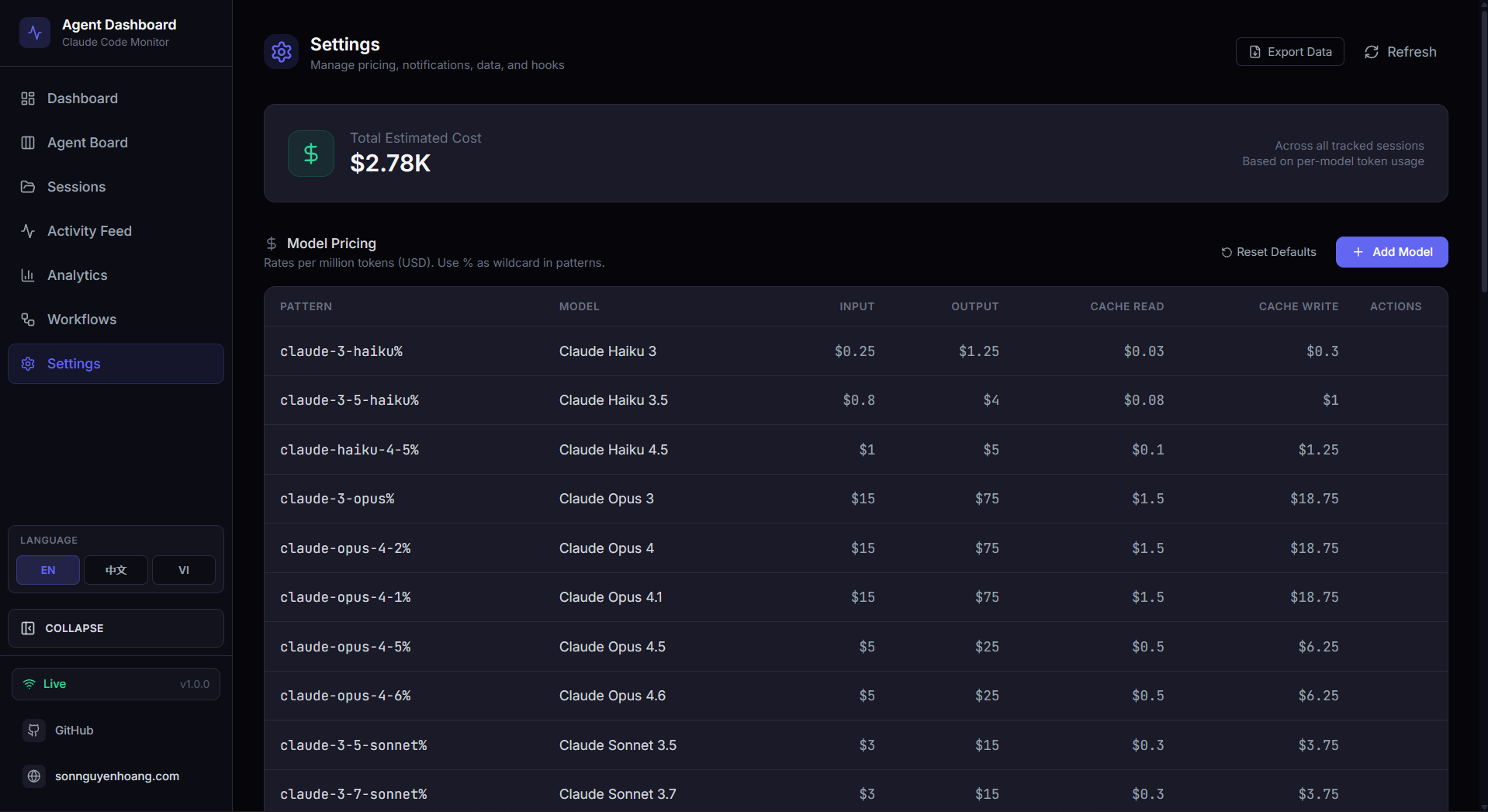

Settings Page

The /settings route provides a comprehensive management interface with

six sections:

Model Pricing

Editable table of per-model pricing rules. Each Claude model variant has its own

explicit pattern (e.g., claude-opus-4-6%). Rates cover input, output,

cache read, and cache write tokens. Reset to defaults or add custom models. The

section header carries an info popover (the i icon) that explains how

rule lookup works (rules are tried from most to least specific, so the longest matching pattern wins), the SQL-style %

wildcard syntax with concrete examples (claude-opus-4-7%,

claude-%-haiku, exact ids), and reminds the user that prices must be

updated manually when Anthropic publishes new rates — already-stored sessions keep

the price applied at ingest time. The CLAUDE_HOME panel and Import History flow are

fully i18n-driven across en/vi/zh.

Hook Configuration

Shows per-hook installation status (SessionStart, PreToolUse, PostToolUse, Stop, SubagentStop, Notification, SessionEnd). One-click reinstall if hooks are missing or outdated. Validates paths and permissions automatically.

Data Management

View database row counts and size. Session cleanup: abandon stale active sessions after N hours, purge old completed sessions after N days. Danger zone: clear all data with confirmation dialog to prevent accidental loss.
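The two cleanup rules can be read as a pure planning step over session rows. The sketch below is an assumption-laden illustration: the `SessionRow` shape, the `lastActivityMs` field, and measuring both cutoffs from last activity are all hypothetical, not the project's schema.

```typescript
// Hypothetical sketch of session cleanup planning.
interface SessionRow {
  id: string;
  status: "active" | "completed";
  lastActivityMs: number; // assumed: epoch milliseconds of the last event
}

interface CleanupPlan {
  abandon: string[]; // stale active sessions to mark as abandoned
  purge: string[]; // old completed sessions to delete
}

const HOUR_MS = 60 * 60 * 1000;
const DAY_MS = 24 * HOUR_MS;

function planCleanup(
  rows: SessionRow[],
  nowMs: number,
  staleHours: number,
  purgeDays: number,
): CleanupPlan {
  const staleCutoff = nowMs - staleHours * HOUR_MS;
  const purgeCutoff = nowMs - purgeDays * DAY_MS;
  const plan: CleanupPlan = { abandon: [], purge: [] };
  for (const row of rows) {
    if (row.status === "active" && row.lastActivityMs < staleCutoff) {
      plan.abandon.push(row.id);
    } else if (row.status === "completed" && row.lastActivityMs < purgeCutoff) {
      plan.purge.push(row.id);
    }
  }
  return plan;
}
```

Separating planning from execution keeps the destructive step (the actual UPDATE or DELETE) small and makes the selection logic easy to test without a database.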

Data Export

Download all sessions, agents, events, token usage, and pricing rules as a single JSON file for backup or analysis. Includes full event history, model metadata, and cost breakdowns in one portable archive.

System Health

Dedicated Health tab on the Dashboard with a composite health score (weighted from success rate, cache hit rate, error rate, and heap usage), storage engine donut chart, tool invocation frequency bars, subagent effectiveness, model token distribution, and compaction impact — all with cursor-following tooltips and 5-second auto-refresh.
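A composite score of this kind is typically a weighted sum of normalized inputs. The sketch below shows one way it could be computed; the weights, field names, and 0 to 100 scale are assumptions, since the source does not state them.

```typescript
// Hypothetical sketch of a weighted composite health score.
interface HealthInputs {
  successRate: number; // 0..1, higher is better
  cacheHitRate: number; // 0..1, higher is better
  errorRate: number; // 0..1, lower is better
  heapUsedRatio: number; // 0..1, lower is better
}

// Assumed weights; they sum to 1 so the result lands on a 0..100 scale.
const HEALTH_WEIGHTS = { success: 0.4, cache: 0.2, error: 0.25, heap: 0.15 };

function clamp01(x: number): number {
  return Math.min(1, Math.max(0, x));
}

function healthScore(h: HealthInputs): number {
  const score =
    HEALTH_WEIGHTS.success * clamp01(h.successRate) +
    HEALTH_WEIGHTS.cache * clamp01(h.cacheHitRate) +
    HEALTH_WEIGHTS.error * (1 - clamp01(h.errorRate)) + // invert: fewer errors is healthier
    HEALTH_WEIGHTS.heap * (1 - clamp01(h.heapUsedRatio)); // invert: more free heap is healthier
  return Math.round(score * 100);
}
```

For example, a 90% success rate, 50% cache hit rate, 10% error rate, and 50% heap usage yield 36 + 10 + 22.5 + 7.5 = 76 under these assumed weights.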

Notification Preferences

Configure native browser notifications with per-event toggles for session starts, completions, errors, and subagent spawns. Automatic permission management with test-send button and graceful fallback when denied.

Each Claude model variant (e.g., Opus 4.6 vs Opus 4.1) has its own explicit pricing pattern because different model versions have different rates. The cost engine uses specificity sorting — longer patterns match before shorter ones.
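Specificity sorting can be sketched as: translate each SQL-style pattern into a regular expression, sort rules by pattern length (longest first), and take the first rule that matches. The code below is a minimal illustration under those assumptions. The `PricingRule` shape, the placeholder rates, and the model ids in the usage note are hypothetical; only `%` is treated as a wildcard, and case sensitivity is not modeled.

```typescript
// Hypothetical sketch of longest-pattern-wins rule lookup.
interface PricingRule {
  pattern: string; // SQL-style pattern where % matches any run of characters
  ratePerMtok: number; // placeholder value, not a real price
}

/** Convert a %-wildcard pattern into an anchored regular expression. */
function likeToRegExp(pattern: string): RegExp {
  const escaped = pattern
    .split("%")
    .map((part) => part.replace(/[.*+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  return new RegExp(`^${escaped}$`);
}

/** Return the most specific (longest-pattern) rule matching the model id. */
function matchRule(modelId: string, rules: PricingRule[]): PricingRule | undefined {
  const bySpecificity = [...rules].sort((a, b) => b.pattern.length - a.pattern.length);
  return bySpecificity.find((rule) => likeToRegExp(rule.pattern).test(modelId));
}

const exampleRules: PricingRule[] = [
  { pattern: "claude-opus%", ratePerMtok: 2 },
  { pattern: "claude-opus-4-6%", ratePerMtok: 1 },
  { pattern: "claude-%-haiku", ratePerMtok: 3 },
];
```

Given these rules, an id such as `claude-opus-4-6-example` matches both Opus patterns but resolves to `claude-opus-4-6%` because that pattern is longer, while `claude-opus-4-1` falls through to the broader `claude-opus%` rule.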

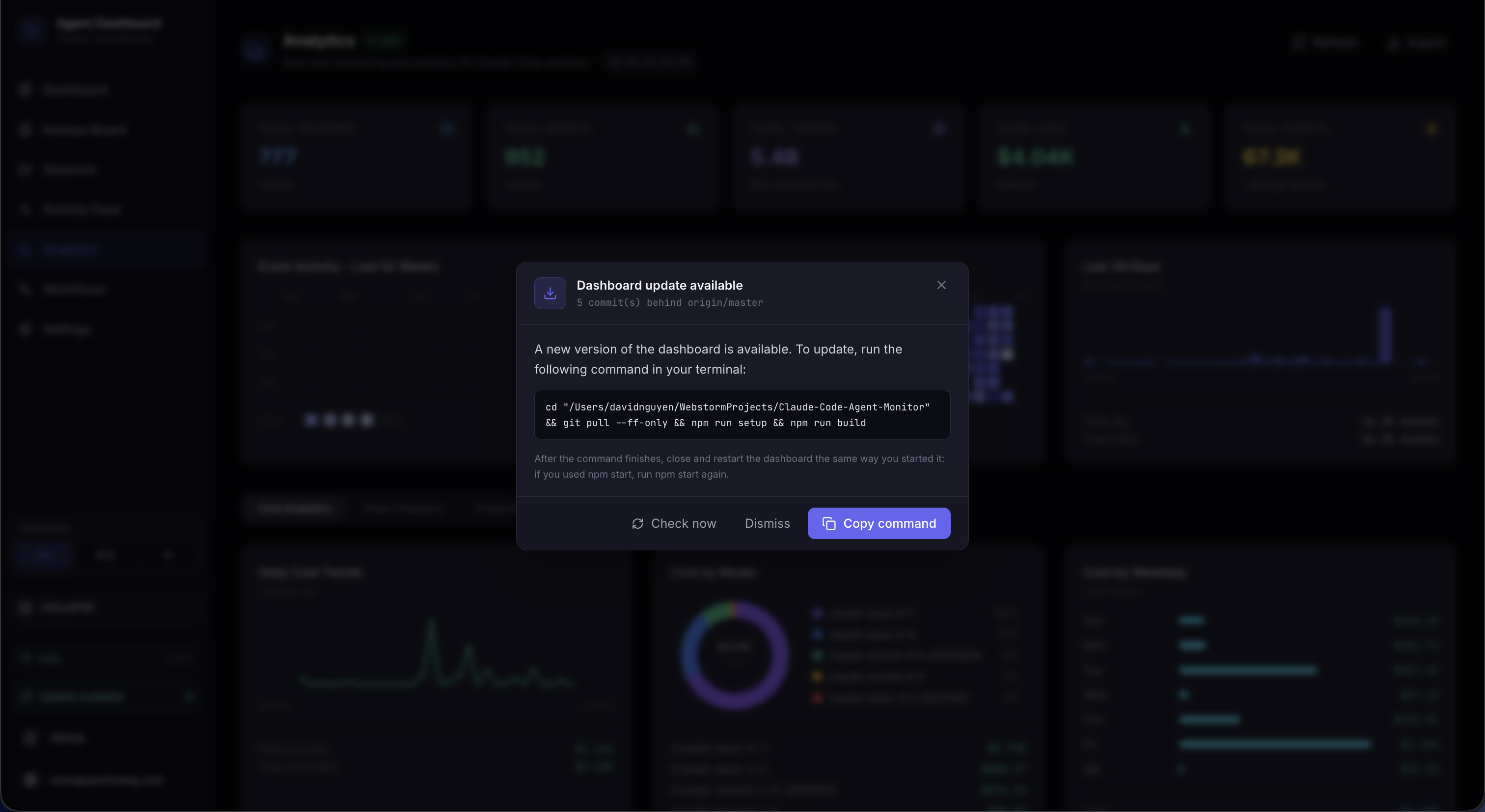

Update Notifier

A detection-only subsystem that tells the user when the dashboard's git checkout is

behind the canonical default branch. Branch- and fork-aware: if an

upstream remote is configured (the standard convention for forks), it

takes priority over origin; the chosen remote's

master / main / HEAD is the comparison ref.

The printed command adapts to the user's situation — git pull --ff-only

only when their branch actually tracks the canonical ref, otherwise

git fetch (with a fast-forward merge in the fork case). The server

never pulls or restarts itself — the user runs the command in a

terminal — so the mechanism cannot break dev sessions, pm2/systemd/launchd/Docker

supervision, or leave orphaned processes.

Detection pipeline from scheduler to UI

Non-Blocking Detection

Detection runs a shell-less git fetch with a 120-second timeout, followed by a

rev-list against the tracked upstream. Each call runs from

server/lib/update-check.js and returns a structured payload —

never throws — so a flaky remote can't stall the dashboard.

5-min Scheduler

update-scheduler.js polls every five minutes with .unref()

timers so it never blocks shutdown, de-duplicates with a fingerprint over the

status payload, and announces up-to-date → behind transitions in a framed stdout

block. Disable entirely with DASHBOARD_UPDATE_CHECK=0.
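The de-duplication and transition logic described above might look roughly like the following. This is a sketch, not the code in update-scheduler.js: the `PolledStatus` fields, the fingerprint format, and the message text are assumptions, and the polling timer itself is left out.

```typescript
// Hypothetical sketch of fingerprint de-duplication and transition announcements.
interface PolledStatus {
  state: "up_to_date" | "behind" | "error";
  upstreamSha: string | null;
  behindBy: number;
}

/** A stable string over the fields that matter for change detection. */
function statusFingerprint(s: PolledStatus): string {
  return JSON.stringify([s.state, s.upstreamSha, s.behindBy]);
}

class UpdateAnnouncer {
  private lastFingerprint: string | null = null;
  private lastState: PolledStatus["state"] | null = null;

  /** Returns a message to print, or null when nothing should be announced. */
  observe(status: PolledStatus): string | null {
    const fp = statusFingerprint(status);
    if (fp === this.lastFingerprint) return null; // identical poll result, skip
    const wasBehind = this.lastState === "behind";
    this.lastFingerprint = fp;
    this.lastState = status.state;
    // Announce only when entering the "behind" state.
    if (status.state === "behind" && !wasBehind) {
      return `Update available: ${status.behindBy} commit(s) behind upstream`;
    }
    return null;
  }
}
```

In this sketch a repeated "behind" result stays silent, and so does a move from one "behind" commit to another; whether the real scheduler re-announces in the second case is not stated in the source.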

Situation-Aware Command

Each status payload carries a manual_command shaped for the user's

actual situation: git pull --ff-only on a tracked canonical branch,

git fetch && git merge --ff-only for forks where local

tracks the wrong remote, and a plain git fetch on a feature branch

where pulling would update the wrong branch. Install / build steps are appended

only when the working tree is actually being rewritten.
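The three cases above map naturally onto a small pure function. The sketch below assumes a `Situation` tag has already been derived from the repository state; the tag names, the parameters, and the appended `npm install && npm run build` steps are hypothetical stand-ins for whatever the server actually emits.

```typescript
// Hypothetical sketch of choosing manual_command from the detected situation.
type Situation = "tracks_canonical" | "fork_wrong_remote" | "feature_branch";

// Assumed post-update steps; appended only when the working tree changes.
const POST_UPDATE_STEPS = " && npm install && npm run build";

function manualCommand(situation: Situation, remote: string, branch: string): string {
  switch (situation) {
    case "tracks_canonical":
      // The local branch tracks the canonical ref, so a fast-forward pull is safe.
      return "git pull --ff-only" + POST_UPDATE_STEPS;
    case "fork_wrong_remote":
      // The local branch tracks the fork, so fetch and fast-forward from the canonical remote.
      return `git fetch ${remote} && git merge --ff-only ${remote}/${branch}` + POST_UPDATE_STEPS;
    case "feature_branch":
      // Pulling would update the wrong branch, so fetch only and leave the tree untouched.
      return `git fetch ${remote}`;
  }
}
```

For instance, `manualCommand("feature_branch", "upstream", "main")` yields a plain `git fetch upstream` with no install or build steps, since nothing in the working tree is rewritten.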

Two UI Surfaces

A modal opens automatically when upstream is ahead; ESC or a backdrop click dismisses it. A persistent sidebar button stays in the footer — emerald when behind, amber when the last check errored — so users can always trigger a fresh check on demand.

Soft Failure Semantics

Non-git installs, no remotes configured, offline fetches, and unresolvable upstream refs all return tagged payloads instead of throwing. The sidebar badge turns amber on fetch errors and the modal stays suppressed until a successful check arrives — no spinners, no stuck state.
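Tagged payloads of this kind fit TypeScript's discriminated unions well. The sketch below illustrates the idea: every failure mode becomes a variant instead of an exception, and the UI derives the badge color and modal visibility from the tag. The variant names, the `neutral` color, and the choice of which variants count as errors are assumptions.

```typescript
// Hypothetical sketch of soft-failure payloads and the UI decisions built on them.
type CheckResult =
  | { kind: "ok"; behindBy: number; upstreamSha: string }
  | { kind: "not_git" }
  | { kind: "no_remote" }
  | { kind: "fetch_failed"; message: string }
  | { kind: "unresolved_ref"; message: string };

/** Run a check and convert any thrown error into a tagged payload. */
function softly(run: () => CheckResult): CheckResult {
  try {
    return run();
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    return { kind: "fetch_failed", message };
  }
}

type BadgeColor = "emerald" | "amber" | "neutral";

/** Emerald when behind, amber when the last check errored (assumed mapping). */
function badgeFor(result: CheckResult): BadgeColor {
  if (result.kind === "fetch_failed" || result.kind === "unresolved_ref") return "amber";
  if (result.kind === "ok" && result.behindBy > 0) return "emerald";
  return "neutral";
}

/** The modal opens only after a successful check that found upstream ahead. */
function shouldOpenModal(result: CheckResult): boolean {
  return result.kind === "ok" && result.behindBy > 0;
}
```

Because callers switch on `kind` rather than catching exceptions, a flaky remote produces an amber badge and a suppressed modal rather than an unhandled error.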

Dismissal Memory

Dismissal is keyed by the upstream SHA in localStorage, so closing

the modal silences it only for that commit — a newer upstream commit

re-opens it automatically. Clicking the sidebar button is an explicit intent

signal and clears the stored dismissal before firing a fresh check.
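Keying the dismissal by SHA can be sketched with a minimal storage interface that the browser's localStorage satisfies structurally. The key name, the function names, and the in-memory `MemoryStore` used for demonstration are assumptions, not the project's identifiers.

```typescript
// Hypothetical sketch of SHA-keyed dismissal memory.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

const DISMISSED_SHA_KEY = "update-dismissed-sha"; // assumed key name

/** Remember that the modal was closed for this specific upstream commit. */
function dismissUpdate(store: KeyValueStore, upstreamSha: string): void {
  store.setItem(DISMISSED_SHA_KEY, upstreamSha);
}

/** True only if the stored dismissal matches the commit currently on offer. */
function isDismissed(store: KeyValueStore, upstreamSha: string): boolean {
  return store.getItem(DISMISSED_SHA_KEY) === upstreamSha;
}

/** Sidebar click: clear the dismissal before a fresh check is fired. */
function clearDismissal(store: KeyValueStore): void {
  store.removeItem(DISMISSED_SHA_KEY);
}

/** In-memory stand-in for localStorage, useful outside the browser. */
class MemoryStore implements KeyValueStore {
  private data = new Map<string, string>();
  getItem(key: string): string | null {
    return this.data.get(key) ?? null;
  }
  setItem(key: string, value: string): void {
    this.data.set(key, value);
  }
  removeItem(key: string): void {
    this.data.delete(key);
  }
}
```

Storing a single SHA rather than a boolean is what lets a newer upstream commit reopen the modal: the comparison simply stops matching.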

API Surface

| Endpoint | Purpose |

|---|---|

| GET /api/updates/status | Read-only check: runs git fetch, compares, and returns the payload. |

| POST /api/updates/check | Same check, and broadcasts update_status over WebSocket so every connected client re-syncs at once. |

There is no POST /api/updates/apply and no in-process restart

helper. A process cannot reliably replace itself without an external supervisor,

and npm run dev, npm start, pm2, systemd, launchd, and

Docker each need different restart logic. Detection-only keeps the mechanism

portable across every supervisor and OS, and leaves the dashboard's lifecycle

owned by whatever started it. The user runs the printed command in their own

shell.

Deployment Modes

Development vs production deployment topology

| Aspect | Development | Production |

|---|---|---|

| Processes | 2 (Express + Vite) | 1 (Express only) |

| Client URL | http://localhost:5173 | http://localhost:4820 |

| API proxy | Vite proxies /api + /ws to :4820 | Same origin, no proxy |

| File watching | node --watch + Vite HMR | None |

| Source maps | Inline | External files |

Container Runtime (Docker / Podman)

The production image is OCI-compatible and works with both Docker and Podman. The

server listens on 4820, reads legacy Claude history from a read-only mount,

and persists SQLite data under /app/data.

Container image build and runtime mounts

# Docker Compose

docker compose up -d --build

# Podman Compose

CLAUDE_HOME="$HOME/.claude" podman compose up -d --build

# Stop the stack

docker compose down

# or

podman compose down

| Mount | Purpose |

|---|---|

| ~/.claude:/root/.claude:ro | Read historical Claude session files for import without modifying them |

| agent-monitor-data:/app/data | Persist the SQLite database across rebuilds and container restarts |

Claude Code fires hooks from the host machine, not from inside the container. After

the container is healthy on http://localhost:4820, run

npm run install-hooks on the host so hook events post back to the

containerized server.

Docker / Podman

A multi-stage Dockerfile and docker-compose.yml are included.

Both Docker and Podman are fully supported — the image is OCI-compliant.

Quick Start

# Docker Compose

docker compose up -d --build

# Podman Compose

CLAUDE_HOME="$HOME/.claude" podman compose up -d --build

Plain Docker / Podman (no Compose)

# Build the image

docker build -t agent-monitor .

# — or —

podman build -t agent-monitor .

# Run the container

docker run -d --name agent-monitor \

-p 4820:4820 \

-v "$HOME/.claude:/root/.claude:ro" \

-v agent-monitor-data:/app/data \

agent-monitor

Volume Mounts

| Mount | Purpose |

|---|---|

| ~/.claude:/root/.claude:ro | Read-only access to legacy session history for automatic import on startup |

| agent-monitor-data:/app/data | Persists the SQLite database across container restarts |

Multi-Stage Build

The Dockerfile uses three stages to minimize the final image size:

| Stage | Purpose |

|---|---|

| server-deps | Installs production node_modules on node:22-alpine. better-sqlite3 is optional — if prebuilds are unavailable, the server falls back to built-in node:sqlite |

| client-build | Runs npm ci + vite build to produce optimized static assets |

| runtime | Clean node:22-alpine with only node_modules, server code, and client/dist |

Claude Code hooks run on the host, not inside the container. The containerized server

receives hook events via HTTP on localhost:4820. Run

npm run install-hooks on the host after starting the container.

Performance Characteristics

| Metric | Value | Notes |

|---|---|---|

| Server startup | < 200ms | SQLite opens instantly; schema migration is idempotent |

| Hook latency | < 50ms | Transaction + broadcast, no async I/O beyond SQLite |

| Client JS bundle | 200 KB / 63 KB gzip | CSS: 17 KB / 4 KB gzip |

| WebSocket latency | < 5ms | Local loopback, JSON serialization only |

| SQLite write throughput | ~50,000 inserts/sec | WAL mode on SSD; far exceeds any hook event rate |

| Max events before slowdown | ~1M rows | Pagination prevents full-table scans |

| Server memory | ~30 MB | SQLite in-process, no ORM overhead |

| Client memory | ~15 MB | React + Tailwind, minimal runtime deps |

Security Considerations

| Area | Approach |

|---|---|

| SQL injection | All queries use prepared statements with parameterized values — no string interpolation |

| Request size | Express JSON body parser limited to 1MB |

| Input validation | Required fields checked before DB operations; CHECK constraints on status enums |

| Hook safety | Hook handler always exits 0; 5s max lifetime; uses 127.0.0.1 not external hosts |

| CORS | Enabled for development; in production same-origin (Express serves the client) |

| Authentication | Intentionally none — local dev tool. Restrict via firewall if exposing on LAN. |

| Secrets | No API keys, tokens, or credentials stored or transmitted anywhere |

| Dependency surface | 5 runtime server deps, 6 runtime client deps (includes D3.js for Workflows) — minimal attack surface |

Troubleshooting

No sessions appearing after starting Claude Code

Check 1 — Is the server running?

curl http://localhost:4820/api/health

# Expected: {"status":"ok","timestamp":"..."}

Check 2 — Are hooks installed?

# Open ~/.claude/settings.json and confirm it contains "hook-handler.js"

# If not, re-run:

npm run install-hooks

Check 3 — Start a new Claude Code session

Hooks only apply to sessions started after installation. Restart Claude Code after starting the dashboard.

Check 4 — Is Node.js in PATH?

On some systems, the shell environment in which Claude Code fires hooks may not include your

full PATH. Test with node --version. If node is not found, use the absolute path to

node in the hook command.

Common Issues

| Problem | Solution |

|---|---|

| better-sqlite3 errors during install | This is non-fatal: better-sqlite3 is an optional dependency. On Node 22+ the server automatically falls back to built-in node:sqlite. On older Node versions, install Python 3 + C++ build tools, then run npm rebuild better-sqlite3. |

| Dashboard shows "Disconnected" | Server is not running. Start it with npm run dev. Client auto-reconnects every 2s. |

| Events Today shows 0 | Ensure you are on the latest version (timezone bug was fixed). Restart the server. |

| Port 4820 already in use | Run DASHBOARD_PORT=4821 npm run dev, update Vite proxy in client/vite.config.ts, and re-run npm run install-hooks. |

| Stale seed data shown | Run npm run clear-data to wipe all rows, then restart. |

| Hooks show validation error about matcher | Ensure you're on the latest version; the hook format was updated to use a matcher: "*" string (not an object). |

| "SQLite backend not available" on startup | Neither better-sqlite3 nor node:sqlite could load. Upgrade to Node.js 22+ (recommended), or install Python 3 + C++ build tools and run npm rebuild better-sqlite3. |

| Docker container runs but no sessions appear | Hooks run on the host, not inside the container. Run npm run install-hooks on the host after the container starts. Verify hooks in ~/.claude/settings.json point to localhost:4820. |

Technology Choices

| Technology | Why This Over Alternatives |

|---|---|

| SQLite (better-sqlite3 / node:sqlite) | Zero-config, embedded, no server process. WAL mode gives concurrent reads. Synchronous API is simpler than async for this use case. better-sqlite3 is preferred when prebuilds are available; falls back to Node.js built-in node:sqlite on Node 22+ when the native module cannot be compiled. |

| Express | Battle-tested, minimal, well-understood. Fastify would be overkill; raw http module would require too much boilerplate for routing. |

| ws | Fastest, most lightweight WebSocket library for Node. No Socket.IO overhead needed; we only push typed JSON messages one-way. |

| React 18 | Stable, widely known, strong TypeScript support. No Server Components or RSC needed for a client-rendered local SPA. |

| Vite 6 | Fast builds, native ESM, excellent dev experience. Proxy config handles the dev server split cleanly with no ejection. |

| Tailwind CSS | Utility-first approach keeps styles colocated with markup. No CSS module boilerplate. Custom dark theme config for the dark UI. |

| React Router 6 | Standard routing for React SPAs. Layout routes with <Outlet> give clean shell composition without prop drilling. |

| Lucide React | Tree-shakeable icon library that only imports what's used (~20 icons). No heavy icon font. |

| TypeScript Strict | Catches null/undefined bugs at compile time. noUncheckedIndexedAccess prevents array bounds issues in analytics aggregations. |

| D3.js + d3-sankey | Industry-standard data visualization library. Powers the Workflows page's 11 interactive sections — DAG layouts, Sankey diagrams, force-directed graphs, bubble charts, and swim-lane timelines. No wrapper libraries needed; direct SVG rendering keeps bundle impact minimal. |

| Python (statusline) | Available on virtually all systems. Handles ANSI and JSON natively with stdlib only. No install step required. |