LLM-as-judge harness

Every briefing is graded on five quality axes (factuality, novelty, source diversity, signal density, and coherence) so that silent regressions are caught before they reach your readers.

Catch prompt drift the day it happens. Block bad cards before they publish. See trends, not just the latest result.

```mermaid
flowchart LR
    CARD["example-cards/<date>-card.json"] --> EX["extract.py\ntext + headlines + URLs"]
    PRIOR["Prior 7 days\nof cards"] --> EX
    EX --> JU["judge.py\nstub / claude / codex / gemini"]
    JU --> RUN["runner.py\nscore | backfill | regression"]
    RUN --> DB[("eval/store.sqlite")]
    DB --> DR["drift.py\n7d vs 30d median ± MAD"]
    DB --> RP["report.py\nweekly Markdown"]
    RUN -- "--gate" --> PUB{"composite ≥ 3.0?"}
    PUB -- yes --> OK["publish proceeds"]
    PUB -- no --> BLOCK["abort, log composite"]
```

| Axis | Weight | What it measures |
|---|---|---|
| factuality | 0.30 | Concrete claims map to cited sources (hard cap 2 if no sources) |
| novelty | 0.20 | New vs. prior 7-day window of cards |
| source_diversity | 0.15 | Distinct domains, primary + secondary mix |
| signal_density | 0.20 | Numbers, named entities, concrete outcomes per item |
| coherence | 0.15 | Thematic grouping with takeaways, not bullet soup |
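The weights above sum to 1.0, which suggests the composite is a weighted mean of the five axis scores. The following is a minimal sketch of that arithmetic, not the harness's actual code. It assumes a 1-5 scale per axis (the scale is not stated here; the 3.0 gate only hints at it) and that the factuality cap is applied before weighting. The names `composite` and `passes_gate` are hypothetical.

```python
# Weights copied from the axis table; they sum to 1.0.
WEIGHTS = {
    "factuality": 0.30,
    "novelty": 0.20,
    "source_diversity": 0.15,
    "signal_density": 0.20,
    "coherence": 0.15,
}

FACTUALITY_CAP_NO_SOURCES = 2.0  # "hard cap 2 if no sources"
PUBLISH_GATE = 3.0               # publish gate threshold from the README


def composite(scores: dict[str, float], has_sources: bool = True) -> float:
    """Weighted mean of axis scores (hypothetical helper, assumed 1-5 scale)."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing axes: {sorted(missing)}")
    adjusted = dict(scores)
    if not has_sources:
        # Assumption: the cap clamps factuality before the weights are applied.
        adjusted["factuality"] = min(adjusted["factuality"], FACTUALITY_CAP_NO_SOURCES)
    return sum(WEIGHTS[axis] * adjusted[axis] for axis in WEIGHTS)


def passes_gate(value: float) -> bool:
    """True when the composite clears the publish gate."""
    return value >= PUBLISH_GATE


card = {"factuality": 4, "novelty": 3, "source_diversity": 3,
        "signal_density": 4, "coherence": 3}
print(f"{composite(card):.2f}")                     # 3.50
print(f"{composite(card, has_sources=False):.2f}")  # 2.90
```

Under these assumptions the same card passes the gate with sources (3.50) and fails without them (2.90), because the cap alone removes 0.6 composite points.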

```bash
# Score one card with the real Claude Haiku judge
make eval D=2026-03-18 JUDGE=claude

# Score every card under example-cards/ in parallel (4 workers)
make eval-backfill JUDGE=claude

# Re-score golden set, fail if any drops > 0.5 composite points
make eval-regression JUDGE=claude

# Drift check: 7d median vs 30d median ± MAD, exit 3 on alert
make eval-drift D=2026-03-18 ALERT_EXIT=1

# Weekly Markdown report
make eval-report D=2026-03-18 W=7 OUT=logs/eval-week.md

# Interactive offline dashboard (Chart.js trend + radar + histogram + table)
make eval-dashboard OPEN=1

# Optional publish gate (exit 2 if composite < 3.0)
python3 eval/runner.py score --date "$DATE" --judge claude --gate
```
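To make the drift check concrete, here is a rough sketch of a median/MAD comparison between a 7-day window and a 30-day baseline. It is not drift.py itself. The alert threshold of 3.0, the 1.4826 normal-consistency scaling, and the names `robust_z` and `drift_exit_code` are assumptions for illustration; only the exit code 3 on alert comes from the command comment above.

```python
import math
from statistics import median

MAD_TO_SIGMA = 1.4826   # scales MAD to a standard deviation for normal data
ALERT_THRESHOLD = 3.0   # hypothetical; the real threshold lives in the rubric
ALERT_EXIT_CODE = 3     # "exit 3 on alert"


def mad(values: list[float]) -> float:
    """Median absolute deviation around the median."""
    centre = median(values)
    return median([abs(v - centre) for v in values])


def robust_z(recent: list[float], baseline: list[float]) -> float:
    """How far the recent median sits from the baseline median, in MAD units."""
    diff = median(recent) - median(baseline)
    spread = MAD_TO_SIGMA * mad(baseline)
    if spread == 0:
        # A perfectly flat baseline: any shift at all is infinitely surprising.
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / spread


def drift_exit_code(recent: list[float], baseline: list[float]) -> int:
    """Return 3 when the robust z-score exceeds the threshold, else 0."""
    return ALERT_EXIT_CODE if abs(robust_z(recent, baseline)) > ALERT_THRESHOLD else 0


# Illustrative composites only, not real harness output.
baseline_30d = [3.6, 3.8, 3.7, 3.9, 3.5, 3.7, 3.8, 3.6, 3.7, 3.8]
recent_7d = [3.1, 3.0, 3.2, 2.9, 3.1, 3.0, 3.2]
print(round(robust_z(recent_7d, baseline_30d), 2))
print(drift_exit_code(recent_7d, baseline_30d))
```

Using the median and MAD instead of the mean and standard deviation keeps a single unusually good or bad card from moving the baseline much, which matters when a window holds only a handful of scores.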

| Component | Role |
|---|---|
| eval/rubric.md | 5-axis definitions, weights, pass thresholds (publish gate, regression gate, drift alert) |
| eval/judge_prompt.md | Versioned judge prompt (v1). PROMPT_VERSION is part of the store key. |
| eval/extract.py | Adaptive-card JSON → flat text + headlines + source URLs; pulls prior 7 days for novelty |
| eval/judge.py | Backends: stub (offline heuristic), claude, codex, gemini. Per-call trace to logs/eval-judge-YYYY-MM-DD.log. |
| eval/store.py + schema.sql | SQLite, idempotent upsert on (card_date, prompt_version, judge_model) |
| eval/runner.py | CLI: score / backfill (parallel --workers) / regression / show, with optional --gate |
| eval/drift.py | Median + MAD-based z-score across rolling windows; robust to small-sample outliers |
| eval/report.py | Weekly Markdown digest: coverage, composite stats, axis medians, per-day table |
| eval/seed_golden.py | Re-baseliner. Lifts store rows into golden/ after a fresh backfill. |
| eval/export_dashboard.py | Serializes store.sqlite + golden/ into dashboard/data.js for the offline UI. |
| eval/golden/*.json | Pinned baseline composites for regression gate |
| eval/dashboard/ | Single-file offline UI (index.html + data.js): trend, radar, histogram, stacked bars, sortable card table. |
| eval/tests/ | 10 unittest cases against the stub backend; run with make eval-test |
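The idempotent upsert in the store row can be pictured with SQLite's `ON CONFLICT ... DO UPDATE` clause (available since SQLite 3.24). This is a sketch under assumptions: the table name `scores`, the single `composite` column, and the helper `upsert_score` are hypothetical, and the real schema.sql may look different. Only the three-column key comes from the table above.

```python
import sqlite3

# Hypothetical schema: the three key columns are from the README, the rest is assumed.
SCHEMA = """
CREATE TABLE IF NOT EXISTS scores (
    card_date      TEXT NOT NULL,
    prompt_version TEXT NOT NULL,
    judge_model    TEXT NOT NULL,
    composite      REAL NOT NULL,
    PRIMARY KEY (card_date, prompt_version, judge_model)
);
"""

UPSERT = """
INSERT INTO scores (card_date, prompt_version, judge_model, composite)
VALUES (?, ?, ?, ?)
ON CONFLICT (card_date, prompt_version, judge_model)
DO UPDATE SET composite = excluded.composite;
"""


def upsert_score(conn: sqlite3.Connection, card_date: str, prompt_version: str,
                 judge_model: str, composite: float) -> None:
    """Insert a score, or overwrite the existing row that shares the same key."""
    with conn:  # commits on success, rolls back on error
        conn.execute(UPSERT, (card_date, prompt_version, judge_model, composite))


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
upsert_score(conn, "2026-03-18", "v1", "claude", 3.4)
upsert_score(conn, "2026-03-18", "v1", "claude", 3.6)  # same key: row is overwritten
upsert_score(conn, "2026-03-18", "v1", "stub", 3.0)    # different judge: new row
rows = conn.execute(
    "SELECT judge_model, composite FROM scores ORDER BY judge_model"
).fetchall()
print(rows)  # [('claude', 3.6), ('stub', 3.0)]
```

Keying on the prompt version and judge model means re-running a backfill never duplicates rows, while scores from a new prompt version or a different judge sit next to the old ones instead of replacing them.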

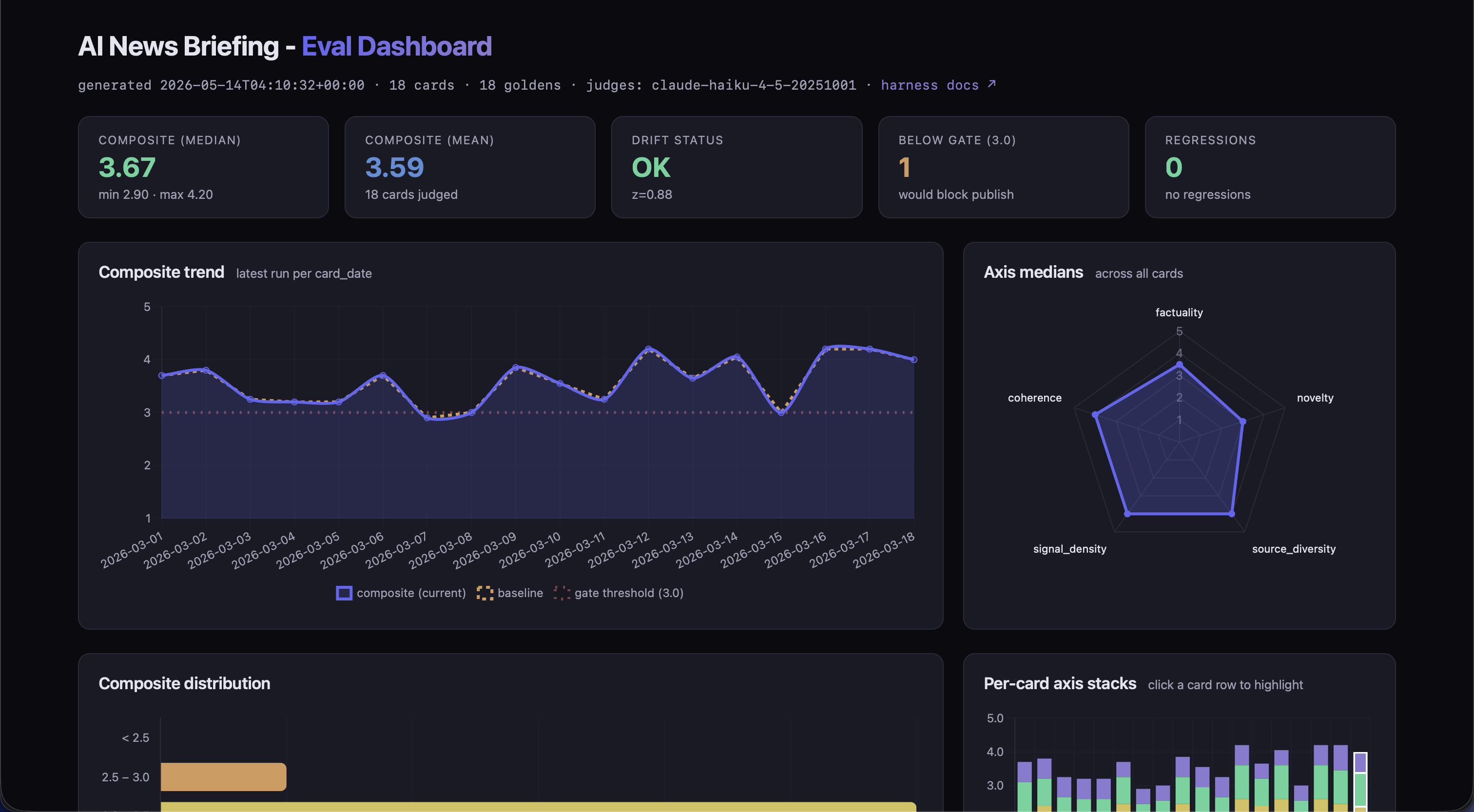

Interactive dashboard

eval/dashboard/index.html is a single-file, offline-renderable UI over eval/store.sqlite + eval/golden/. make eval-dashboard serializes the store into data.js (window.EVAL_DATA); Chart.js renders the trend, radar, histogram, and per-card stacked bars. The table is sortable, filterable, and searchable; clicking a row populates a detail panel and highlights that card in the stack chart. No backend, no build step.
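One way to read the data.js step: the exporter writes a JavaScript file that assigns a JSON payload to `window.EVAL_DATA`, so the page can pick it up through a plain script tag and never needs to fetch anything, which is what lets it work without a backend. A minimal sketch of that serialization follows. The payload shape (`cards`, `golden`) and the function name `render_data_js` are assumptions, not the actual export_dashboard.py.

```python
import json


def render_data_js(cards: list[dict], golden: dict[str, float]) -> str:
    """Render a data.js body that assigns the payload to window.EVAL_DATA.

    The payload keys are hypothetical; the real exporter may use another shape.
    """
    payload = {"cards": cards, "golden": golden}
    body = json.dumps(payload, indent=2, sort_keys=True)
    return f"window.EVAL_DATA = {body};\n"


# Illustrative values only.
data_js = render_data_js(
    cards=[{"card_date": "2026-03-18", "judge_model": "claude", "composite": 3.5}],
    golden={"2026-03-18": 3.4},
)
print(data_js)
```

Writing the returned string to eval/dashboard/data.js and loading it from index.html with a script tag would make the data available as a global before the chart code runs.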

```mermaid
flowchart LR
    DB[("eval/store.sqlite")]
    GD["eval/golden/*.json"]
    EX["export_dashboard.py\n--judge filter\n--open"]
    JS["eval/dashboard/data.js\nwindow.EVAL_DATA"]
    HTML["eval/dashboard/index.html\nChart.js + vanilla JS\nworks over file://"]
    DB --> EX
    GD --> EX
    EX --> JS --> HTML
```